Open-Source Internship opportunity by OpenGenus for programmers. Apply now.

In terms of compiler optimization, classical compilers used a method called Peephole Optimization, which is a powerful optimization approach. During the early stages of implementation, this approach was mostly used with string pattern matching on regular expressions, which are known as classic peephole optimizers. The string pattern matching technique is considered as a processing of the input of the syntax of assembly code in classical optimizers, but its meaning is lost. This article analyses prior research and current research concerns in the context of 'optimizing' compilers through the implementation of peephole optimization applying distinctive pattern matching approaches that focus on regular expression. It aims to provide a pattern matching technique based on a rule application strategy to optimize assembly code syntax for a given machine or retargetable (machine independent) in the context of structured programming, procedure-oriented programming, and object-oriented programming.

Table of contents:

- Introduction to compiler optimizations

- Common Techniques Applied in Peephole Optimization

- Prior Research

- Machine Specific Peephole Optimizers

- Machine Independent Peephole Optimizers

- Combining Optimization with Code Generation

- Optimization Rules (Pattern) Matching Strategies

- Research Issues

- Conclusion

Prerequisite: Different Code Optimizations in Compiler Design

Introduction to compiler optimizations

In compiler optimization theory, compiler optimization refers primarily to the program optimization to improve execution efficiency. Program optimization refers to three aspects (i) front-end: a programming language code,(ii) intermediate code: an assembly language code generated by the compiler appropriate to the programming language and (iii) back-end: the specific machine or object code generated from the assembly language code for the actual execution by the compiler. The Peephole Optimization is a kind of optimization technique performed over a very small set of instructions in a segment of generated assembly code (Intermediate code). The set of instructions is called a "peephole" or a "window". It operates by identifying groups of instructions that can be replaced with shorter or quicker sets of instructions to improve the speed or performance of instruction sequence execution. Basically Peephole Optimization is a method which consists of a local investigation of the generated object code means intermediate assembly code to recognize and replace inefficient sequence of instructions to achieve optimality in targeted machine code in context of execution or response time, performance of the algorithm and memory or other resources usage by the program.

Common Techniques Applied in Peephole Optimization

-

Constant folding - Constant sub-expressions should be evaluated ahead of time.

E.g. r2 := 3 x 2 becomes r2 := 6 -

Strength reduction - Faster operations will be replaced with slower ones.

E.g. (a) r1:= r2 X 2 becomes r1 := r2 + r2 then r1 := r2<<1

(b) r1 := r2/2 becomes r1 := r2>>1 -

Null sequences – Operations that are ineffective will be removed.

E.g. r1 := r1 + 0 or r1 := r1 X 1 has no effect -

Combine operations - Replacement of the few operations with similar effective single operation.

E.g. If r2 := r1 X 2, then r3 := r2 X 1 becomes r3 := r1 + r1 -

Algebraic laws - Simplification and reordering of the instructions using algebraic laws.

E.g. If r1 := r2, then r3 := r1 becomes r3 := r2 -

Special case instructions - Use instructions designed for special operand cases.

E.g. r1 := r1 + 1 becomes inc r1 -

Address mode operations - Simplification of the code using address modes.

E.g. r2 := var becomes r2 := 0x500

Prior Research

Machine Specific Peephole Optimizers

According to William McKeeman, Peephole optimization is a straightforward yet powerful optimization method. It was noted that when a program gets compiled, the code emitted from the code generators contained many redundancies around borders of basic blocks like chains of jump instructions. It becomes complicated case analysis to reduce these redundancies during the code generation phase. As a result, it was necessary to assign a distinct phase to deal with them. The following was the description of the concept:

A peephole, a tiny window consisting of no more than two assembly code instructions, is passed across the code. When redundant instructions are discovered in the sequence, they are changed with shorter or quicker instruction sequences. The peephole optimizer does this by employing basic handwritten pattern rules. These are initially compared to the assembly instructions to determine their suitability for testing, and if a match is established, the instructions are replaced.

The efficiency and limitations of the optimizer appear to be mostly determined by the amount of time and space available for identifying redundant sequences of instructions.

Example:

Sample Code:

X: = Y; Z: = Z + X;

Compiled Code:

LDA Y - Load the accumulator from Y

STA X - Store the accumulator in X

LDA X - Load the accumulator from X

ADD Z - Add the content of Z

STA Z - Store the accumulator in Z

In the above given simple code, if the store instruction is nondestructive, the third instruction is redundant and may be discarded.

Lamb's Peephole Optimizers were published in 1981. The code generator generates assembly code in the form of a doubly-linked list of nodes. The optimizer uses a compiled version of the patterns, which is then simplified for testing applicability for set of instruction sequences. The form of the optimization rules:

< Input pattern line 1 [condition (var)] >

………

< Input pattern line n >

=

< Replacement pattern line 1 >

………

< Replacement pattern line n >

Condition (var) is optional in the above-mentioned pattern matching rule (and hence expressed with square brackets); it can only appear in the matching section of the pattern rule. In an optimization rule a condition over some variable(s) occurring in the pattern is referred to as an Escape by Lamb that must be met. If all the instructions in the preconditions part can be applied to adjacent assembly code instructions with any particular conditions over variables evaluating to true then and only then the pattern match is successful. Escapes can also be embedded, where they represent an additional action to be performed rather than describing a condition over the variables as in the matching part. McKeeman originally adopted the other approach, until more improvement can be made, one would have to go through the code several times. Since the code has to be tested once only, the backward strategy is surely better and effective. The above discussed style of matching optimization pattern rule and backward strategy are also used by Fraser's peephole optimizer Copt through being a retargetable optimizer.

The work discussed in this article adopts the above mentioned style of pattern matching rule for the regular expression and the generic backward strategy, which is very applicable to classical peephole optimizer implementation.

Machine Independent Peephole Optimizers

The classical peephole optimizers were machine dependent and so they could only specific to a single machine. Retargetable optimizers are different then the classical ones in their capability of being machine independent and therefore easily retargeted to other machines. PO generally takes two inputs, one is assembly code of the program and the second is the target machine's descriptions on which the program suppose to be executed proceeding as follows. Ralph E. Jonson and Carl McConnell have defined the RTL System using the technique of register transfers as an intermediate code for compilers. Their work provides an object-oriented framework for optimizing compilers to implement peephole optimizers.

In their work, Tanenbaum et al. certainly gets the advantage of having optimizations at the intermediate code level in the case like flags and register allocation issues do not need to be considered yet. HOP is being adapted for the use in the Portable Standard Lisp compiler. In order to perform a match of a sequence of input instructions with pattern rules, the input instructions and pattern rules are stored in separate hash tables. If the match is successful, the context sensitive information of the input is analyzed for consistency with the optimization rule. Using this way, HOP stay away from dealing with strings and enhances matching speed to that of byte-to-byte comparison.

An optimizer tool described by Whitfield and Soffa in 1991 that is basically using an optimization specification language and an optimizer generator. Davidson and Fraser has enhanced the functionalities of the original version of PO in 1984 added with the CSE. Ganapathi et al., has given a totally different approach of peephole optimization in compiler construction. For describing target machine instructions they utilize attribute grammar parsing techniques instead of using register transfers.

Combining Optimization with Code Generation

From review of the above research works we can understand that the retargetablity and platform independency of the peephole optimizers in compiler construction had been successfully achieved but speed is always remained an issue.In order to overcome this, the overall execution time for the code generation and optimization phases had to be reduced. As mentioned at the beginning of this section, allowing for optimization in code generation itself would have resulted in complicated case analysis. So the goal here was to maintain the efficiency of both phases without focusing the individual algorithms too much. The concept of combining two phases of code generation and optimization in to one phase has been described by Fraser and Wendt in 1986. Using this concept they extended the initial implementation of HOP. A similar rule-based rewriting system is introduced by Ancona in 1995. The goal here was to maintain the efficiency of both phases without focusing the individual algorithms too much. Code generation and optimization in lcc are combined into a single process, a rule based, pattern matching and replacement algorithm.

Other research has shown that the peephole optimization can greatly improve code given by a naive code generation. The optimizer is not intended to replace Navia's optimizer in any way as the optimizer's rule set is partial in particular. Pattern matching strategies and its surrounding information to the techniques that are in concern with the framework of peephole optimization is described in the next segment.

Optimization Rules (Pattern) Matching Strategies

The field of pattern matching is having applicability to many areas of computer science. Patterns are represented as regular expressions classically, which provide a format for expressing the sequence of characters to look for. If the string represents a valid occurrence of the pattern then a pattern successfully matches an input string and if at any point during matching, the input string does not satisfy the requirement of pattern then the pattern fails to match a string. A pattern matching is an information processing problem, in which variables are bound and constriction have to be satisfied. In object-oriented languages, an object represents a smallest unit of information, so everything is put together around objects.

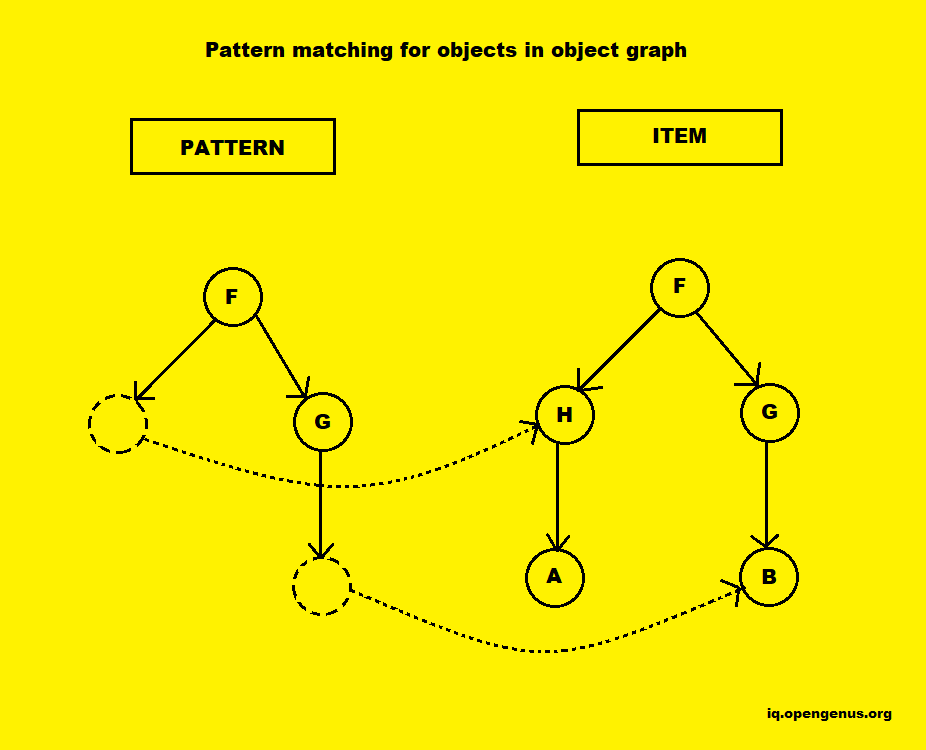

This means that for applying pattern matching with strings and regular expressions, the programmer has to work on lower level of abstraction. Visser J. described an approach to support pattern matching in mainstream object-orientated languages without language extension in 2006. In this approach a pattern is created and be passed as an argument in a form of a first class entity which provides methods invocation just like other objects. This approach is implemented by performing matching of the objects in object graph. The pattern is an object graph that contains variable objects, indicated by empty dashed circles. After matching, these variables are bound to corresponding objects in the term, indicated by the dotted arrows.

JMatch is another attempt to introduce pattern matching in Java, though by language extension. Mostly, in the case of peephole optimization, one not only wishes to identify patterns, but to replace them as well. So pattern matching is also a rewriting problem. Visser E. et al described and published Strategic pattern matching in 1999, and also introduces Stratego in 2000-2005, which a term rewriting language that provides elasticity in strategy application by separating rule definitions from strategy specifications. Hence a suitable rewriting strategy can be selected for applications with a base set of rules according to the input. Peephole optimizers have always implicitly supported this design: Usually there is a separate rules file and a peephole optimizer skeleton in classical peephole optimizers. But as for strategically aspects of rule application, only the backwards strategy has been applied so far.

Research Issues

- As per the earlier research work, size of peephole is only capable to cover a smaller set of instructions of the intermediate code for the optimization investigation and so the issue is that, can peephole size be increased that can cover bigger set of intermediate code instructions generated from the longer instruction sequences of Higher Level Language? And how it is possible?

- As per the study of different Peephole Optimizer, it is also important to choose an efficient pattern matching strategy for the replacement part. Earlier Peephole Optimizers have commonly implemented string based pattern matching approach and latter on other pattern matching strategies have been identified like tree manipulation or object based pattern matching. A question arises from the above study is that, can other pattern matching strategy be implemented rather than string based for replacement part?

- Peephole Optimizers were generally focusing on pattern matching strategies and replacement rules to map and replace redundancy from the code and so that the meaning of the code gets lost. So the issue suggests can PO be achieved even more successfully by utilizing the meaning of the code? And how?

- PO is an old technique from the 1980s, and from the observation of above study, we found that the concept of Peephole Optimization has already applied with structured or procedure oriented programming approaches to optimize object code but a question arises is that can it be combined with newer concepts & approaches like OOP with more efficiency for optimizing byte code?

- Optimization Overhead is a major issue to the work of optimizing compiler to be concerned as the number of scan performed over intermediate code until no more improvement found.

Conclusion

This article at OpenGenus discusses the Peephole Optimization approach and its application for the creation and design of optimising compilers, as well as the optimization rules that should be matched to examine and replace unnecessary intermediate code instructions. Various pattern matching techniques, such as string-based, tree-based, and object-based pattern matching of pattern rules, have also been studied. The major component of this article is broken into four sections, the first of which focuses on machine dependent peephole optimizers that are capable of working with object code instructions and are hard programmed to the specific machine and pattern rules. The second section discusses the basis of retargetable peephole optimizers that are machine agnostic and can work with register transfers rather than assembly code to avoid extensive case analysis. It also provides pattern matching algorithms that make use of the hashing technology. A few studies in this part have also examined the notion of automatic generators of optimization rules, which indicates that pattern rules can be automated and machine driven. The final section addresses the merging of code generation and optimization into a single step to improve optimization speed and efficiency. The last section presents several pattern matching algorithms that have been applied across various peephole optimizers. We may also comprehend the present state and research difficulties involving peephole optimization in the building and design of optimising compilers by studying all sections of this article.