A search engine is a technology that ranks pages across the Internet according to some rule. The pages it shows depend on the query the user typed, and that query arrives as text. To answer it, the search engine first needs to understand what the user wants to find. This is hard because natural language is ambiguous: the same thing can be expressed in many ways. Over the years search engines have improved a great deal at this, but they still sometimes get complex or conversational queries wrong. As a result, users often fall back on "keyword-ese", typing strings of words they think the search engine will understand rather than the question as they would naturally write or ask it in real life.

The breakthrough in handling such queries came from Google's research on transformers, which resulted in BERT (Bidirectional Encoder Representations from Transformers). These models process each word in relation to all the other words in the sentence. To build the representation, or meaning, of a word, they look at the words around it. In other words, they use the context in which the word appears rather than only its dictionary meaning. Looking at the words both before and after a word makes the model much better at working out what that word means in the text.
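The idea of building a word's meaning from the words on both sides of it can be sketched with a toy attention step. This is only an illustration, not BERT itself: the two-dimensional vectors in `TOY_VECTORS` are invented, and `contextual_vector` is a hypothetical helper that mixes a word's vector with those of every other word in the query.

```python
import math

# Toy 2-d word vectors, invented for illustration only (not real BERT embeddings).
# First dimension ~ "job/workplace" sense, second ~ "product/software" sense.
TOY_VECTORS = {
    "do": [0.1, 0.1],
    "estheticians": [0.9, 0.1],
    "stand": [0.5, 0.5],
    "at": [0.1, 0.1],
    "work": [0.8, 0.2],
    "alone": [0.1, 0.9],
    "software": [0.2, 0.8],
}


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def contextual_vector(tokens, index):
    """Mix the vector at `index` with the vectors of all tokens, left and right."""
    target = TOY_VECTORS[tokens[index]]
    # Score every token in the query against the target word (dot product).
    scores = [sum(a * b for a, b in zip(target, TOY_VECTORS[t])) for t in tokens]
    weights = softmax(scores)
    # The contextual vector is the attention-weighted average of all token vectors.
    return [
        sum(w * TOY_VECTORS[t][d] for w, t in zip(weights, tokens))
        for d in range(len(target))
    ]


job_query = ["do", "estheticians", "stand", "at", "work"]
product_query = ["stand", "alone", "software"]

job_vec = contextual_vector(job_query, job_query.index("stand"))
product_vec = contextual_vector(product_query, product_query.index("stand"))

print("stand (job context):    ", job_vec)
print("stand (product context):", product_vec)
```

The static vector for 'stand' is identical in both queries, yet the contextual vectors differ because the neighbouring words differ. A real transformer learns its vectors and attention weights from data and stacks many such layers.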

For an in-depth understanding of the BERT model, please follow this link.

Cracking your queries

BERT helps most with longer, conversational queries and with searches in which prepositions such as 'to', 'on' and 'for' matter a lot because they drive the overall meaning of the query. For these, the search engine is able to understand the context of what the user searched. Users can therefore search in whatever way feels natural to them, much as they would ask the question aloud. BERT looks at the words around each word to get at the underlying meaning of the query text.

BERT in action

Example 1

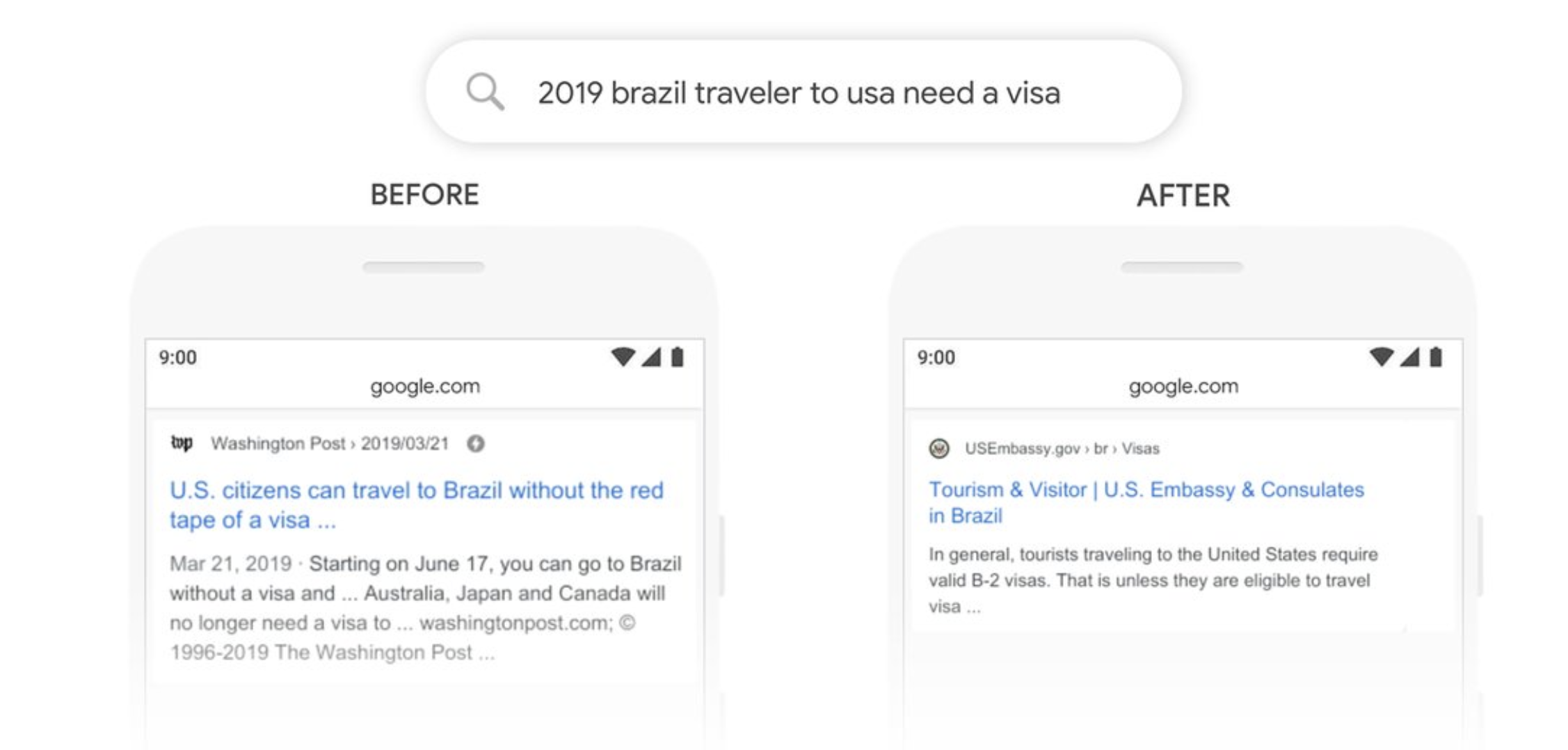

The figure shows search results for the query “2019 brazil traveler to USA need a visa.” The most important word in this query is 'to', because it determines the meaning: the query is about a Brazilian traveling to the U.S., not the other way around. Previous models did not understand this role of 'to', which is why they returned information about U.S. citizens traveling to Brazil instead. With BERT, the search engine grasps this subtle connection and knows that the word 'to' matters a lot here. Hence, it can return results relevant to what the user actually intended to search for.
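Why word order matters here can be shown with a small sketch. It assumes, as a simplification, that an older system treats a query as an unordered bag of words; the source does not say exactly how previous models worked. The reversed query and the `destination` helper are hypothetical and exist only for this illustration.

```python
from collections import Counter


def bag_of_words(query):
    """Order-insensitive view of a query: just word counts."""
    return Counter(query.lower().split())


def destination(query):
    """Hypothetical order-aware reading: the word right after 'to'."""
    tokens = query.lower().split()
    return tokens[tokens.index("to") + 1]


brazil_to_usa = "2019 brazil traveler to usa need a visa"
usa_to_brazil = "2019 usa traveler to brazil need a visa"  # invented reverse query

# Both queries contain exactly the same words, so a bag of words cannot tell them apart.
same_bag = bag_of_words(brazil_to_usa) == bag_of_words(usa_to_brazil)
print("Same bag of words:", same_bag)

# Reading the word order around 'to' recovers the direction of travel.
print("Destination in query 1:", destination(brazil_to_usa))
print("Destination in query 2:", destination(usa_to_brazil))
```

BERT does not use a hand-written rule like `destination`; it learns from data how words such as 'to' relate the words around them. The sketch only shows what information is lost when order is ignored.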

Example 2

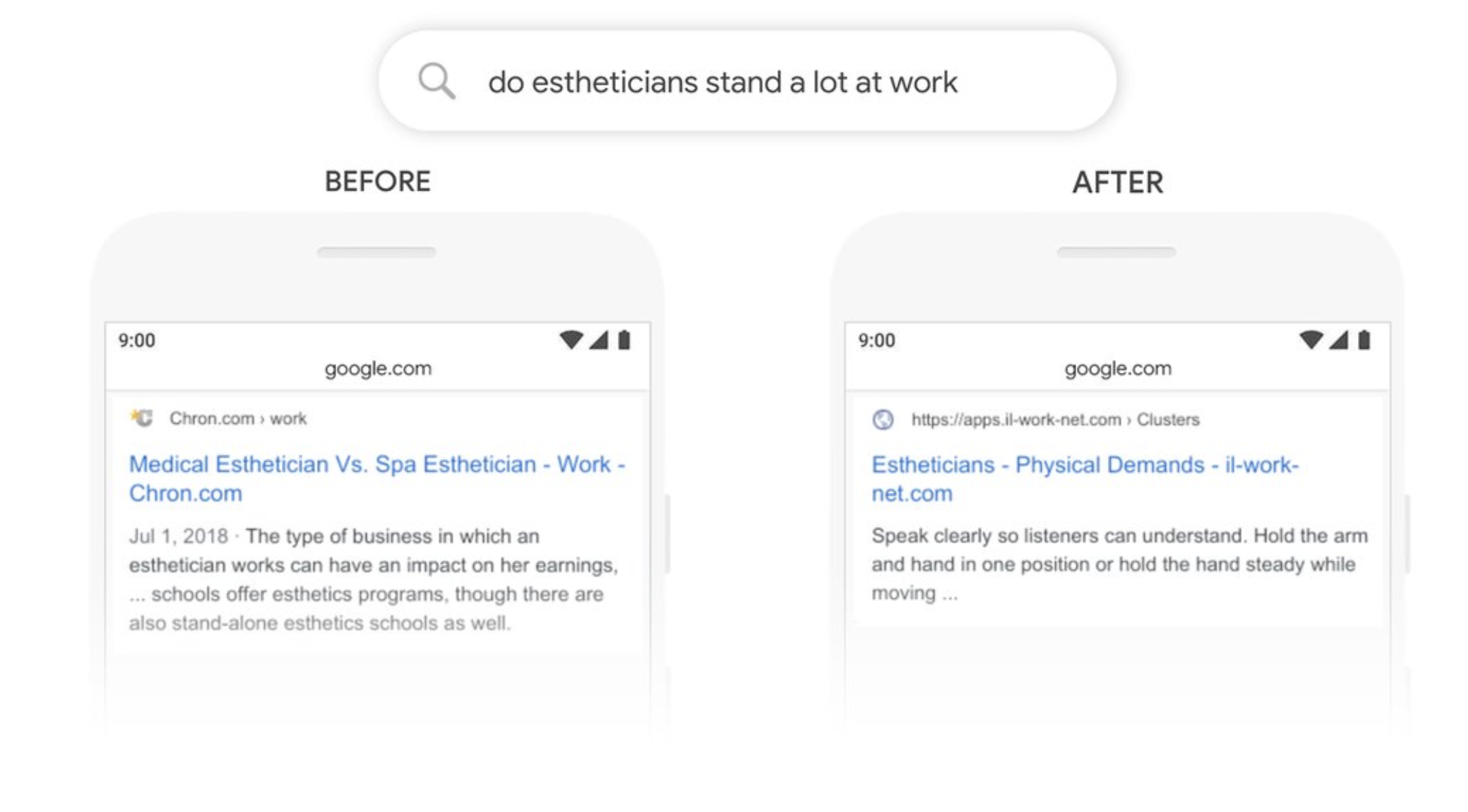

The figure shows search results for the query “do estheticians stand a lot at work.” Previous models generally took a keyword-matching approach. For example, the search engine would match the term 'stand-alone' in a result with the word 'stand' in the user's query. In this context, however, that is not the right sense of the word. The BERT result, by contrast, shows that the model understands 'stand' is being used in relation to the physical demands of a job. Because it captures the actual context of the word, it is able to show a result relevant to the query.
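The keyword-matching failure described above can be reproduced with a minimal sketch. The two document titles are invented, and `keyword_match` is a hypothetical stand-in for naive string matching, not the actual behaviour of any search engine.

```python
# Invented example documents, for illustration only.
documents = [
    "Stand-alone esthetician software for salons",
    "Estheticians are on their feet for most of the working day",
]


def keyword_match(query_word, docs):
    """Naive matching: keep every document that contains the query word as a substring."""
    return [d for d in docs if query_word.lower() in d.lower()]


matches = keyword_match("stand", documents)
print(matches)
```

Here the only match is the 'stand-alone' title, which has nothing to do with the physical demands of the job, while the document that actually answers the question is skipped because it never uses the string 'stand'.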

BERT is not restricted to any one language. It can be used across the globe with very different languages, because these models learn the underlying patterns of how words are used and how they combine into meaningful sentences. What the models learn in one language can then be applied to another. For instance, a model can learn these patterns and structures from English and use them to understand Turkish. This is very useful because large amounts of text are available in English but far less in Turkish. It helps the search engine understand the user's query in many more languages.

To know more about this method, please read this article on M-BERT.

No matter what users are looking for, they should enter the query in its natural form, the way people speak, rather than in the keyword-ese they think might return relevant results. But not everything works the way we want, even with BERT. For example, for the search “what state is south of Nebraska”, BERT returns results about a community called “South Nebraska”.

Natural language understanding remains a difficult challenge even after the release of BERT, and it keeps the research community working to improve Search. Search engines keep getting better at finding the meaning in, and the most helpful information for, every query users send their way. OpenAI's GPT-3 is just one example of what these language models can do when they are built with many parameters.