In this article, we explore OpenPose in depth: a system that uses machine learning for human pose detection.

Table of contents:

- Introduction to OpenPose

- Training of OpenPose

- Architecture of OpenPose

- Working

- FAQ on OpenPose

- Conclusion

Introduction to OpenPose

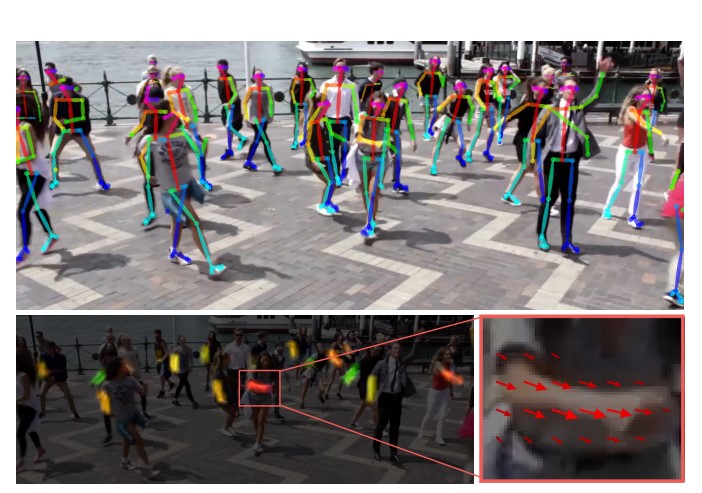

OpenPose is a pose detection library that can detect the poses of multiple people from real-time input. It locates human body joints using up to 135 keypoints, which are taken from different parts of the body such as the hands, feet and face.

Training of OpenPose

OpenPose uses a bottom-up approach, meaning it first detects all the keypoints (body parts) in the image and then associates them with individual people. A top-down approach instead detects each person first and then estimates one pose per detection. Bottom-up approaches are generally faster and more efficient than top-down approaches when many people are present. The top-down approach also does not consider spatial dependencies between different people, which makes it harder to use for multi-person pose estimation.

OpenPose uses datasets such as MPII, which annotates keypoints of the human body such as the torso, hands, hips and shoulders, and COCO, whose keypoint annotations cover the body together with a few facial points (nose, eyes and ears). OpenPose takes images, videos and live streams as input and generates output in formats such as XML and JSON.
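As a rough illustration of consuming the JSON output, the sketch below parses a small hand-written sample. It assumes the layout written by recent OpenPose releases, where each entry in `people` carries a flat `pose_keypoints_2d` list of `x, y, confidence` triples. The sample values and the helper name `read_pose_keypoints` are made up for illustration.

```python
import json

# Hand-written sample in the assumed OpenPose JSON layout (values are invented).
SAMPLE = """
{
  "version": 1.3,
  "people": [
    {"pose_keypoints_2d": [100.0, 50.0, 0.9, 110.0, 80.0, 0.8, 0.0, 0.0, 0.0]}
  ]
}
"""

def read_pose_keypoints(text):
    """Return, for every person, a list of (x, y, confidence) tuples."""
    data = json.loads(text)
    people = []
    for person in data.get("people", []):
        flat = person.get("pose_keypoints_2d", [])
        # Group the flat list into consecutive (x, y, confidence) triples.
        triples = [tuple(flat[i:i + 3]) for i in range(0, len(flat) - 2, 3)]
        people.append(triples)
    return people

poses = read_pose_keypoints(SAMPLE)
for index, keypoints in enumerate(poses):
    # A confidence of 0 is treated here as "keypoint not detected".
    detected = [kp for kp in keypoints if kp[2] > 0]
    print(f"person {index}: {len(detected)} of {len(keypoints)} keypoints detected")
```

Keeping the confidence value next to each coordinate makes it easy to filter out weak detections before any further processing.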

The OpenPose system is constantly being improved as the available datasets grow in size, which increases the accuracy of the system by a large margin. The face mask detection, foot detection and body part detection datasets are continuously being combined to bring about this improvement.

Architecture of OpenPose

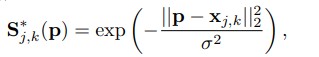

OpenPose has a two-branch architecture: one branch generates confidence maps and the other generates part affinity fields (PAFs) for part association. The architecture above shows that the first set of stages predicts the part affinity fields, while the last set of stages generates the confidence maps. Pose detection is the result of iteratively refining the PAFs and confidence maps. This is done with convolution blocks, each made of three convolutions with a 3x3 kernel, together with the concatenation of the features extracted in the previous stages. The 7x7 kernel size of the earlier design was reduced to 3x3 for better feature detection.
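The move from 7x7 to 3x3 kernels can be checked with a little arithmetic. The sketch below is a simplified comparison that assumes stride-1 convolutions and counts weights for a single input/output channel pair, ignoring biases and the extra channels that concatenation introduces. The function names are illustrative only.

```python
def stacked_receptive_field(kernel_size, layers):
    """Receptive field of `layers` stride-1 convolutions applied in sequence."""
    return 1 + layers * (kernel_size - 1)

def weights_per_channel_pair(kernel_size, layers=1):
    """Weights for one input/output channel pair, ignoring biases."""
    return layers * kernel_size * kernel_size

single_7x7 = (stacked_receptive_field(7, 1), weights_per_channel_pair(7))
triple_3x3 = (stacked_receptive_field(3, 3), weights_per_channel_pair(3, 3))

print("one 7x7 layer:    receptive field %d, weights %d" % single_7x7)
print("three 3x3 layers: receptive field %d, weights %d" % triple_3x3)
```

Under these assumptions, three stacked 3x3 convolutions see the same 7x7 neighbourhood as one 7x7 convolution while using fewer weights, and they add two extra non-linearities along the way.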

Working

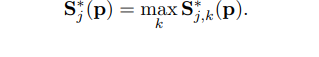

An RGB image is given to OpenPose as input. OpenPose first extracts features from this image using the first few layers of the network. After feature extraction, the part affinity fields are generated to associate body parts with one another. Along with these, a set of 18 confidence maps is generated, each indicating how likely a particular body part is to be at a given position.

Each PAF (part affinity field) is a 2D vector field that encodes both the location of a limb and the direction in which it points.

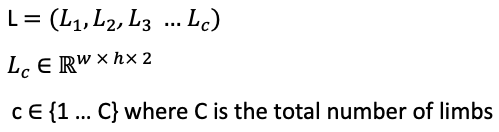

where:

- L is the part affinity field

- p is an image point

- k is the person

- c is the limb
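To make the symbols concrete, here is a minimal sketch of the ground-truth field for one limb c of one person k, evaluated at an image point p. It assumes the usual definition from the OpenPose paper: the value is the unit vector along the limb when p lies on the limb, and zero otherwise. The function name `limb_paf` and the `limb_width` parameter are illustrative assumptions, not part of any OpenPose API.

```python
import math

def limb_paf(p, x1, x2, limb_width=1.0):
    """Ground-truth PAF value at point p for one limb running from x1 to x2.

    Returns the unit vector along the limb if p lies on the limb, else (0, 0).
    """
    dx, dy = x2[0] - x1[0], x2[1] - x1[1]
    length = math.hypot(dx, dy)
    if length == 0:
        return (0.0, 0.0)
    vx, vy = dx / length, dy / length      # unit vector along the limb
    px, py = p[0] - x1[0], p[1] - x1[1]
    along = vx * px + vy * py              # distance travelled along the limb
    across = abs(-vy * px + vx * py)       # distance from the limb axis
    if 0 <= along <= length and across <= limb_width:
        return (vx, vy)
    return (0.0, 0.0)

# Hypothetical elbow and wrist positions for one person.
elbow, wrist = (2.0, 2.0), (6.0, 2.0)
on_limb = limb_paf((4.0, 2.5), elbow, wrist)
off_limb = limb_paf((4.0, 5.0), elbow, wrist)
print(on_limb, off_limb)
```

When several people overlap at the same point, the per-person fields are combined (the paper averages the non-zero vectors) to give a single field per limb type.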

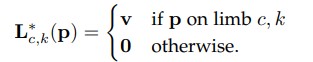

The part affinity field generated in this way denotes the association between different body parts. The association itself is done by bipartite matching.
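A minimal sketch of the matching step for one limb type is shown below. It uses a simple greedy strategy (highest score first), which is an assumption made for brevity; an optimal assignment algorithm could be swapped in. The candidate names and scores are hypothetical.

```python
def greedy_match(scores):
    """Greedily pair candidates of two part types.

    `scores[(a, b)]` is the association score between candidate a of the
    first part type and candidate b of the second. Each candidate is used
    at most once, so the result is a matching in the bipartite graph.
    """
    matches = []
    used_a, used_b = set(), set()
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    for (a, b), score in ranked:
        if score <= 0 or a in used_a or b in used_b:
            continue
        matches.append((a, b, score))
        used_a.add(a)
        used_b.add(b)
    return matches

# Hypothetical scores between two elbow candidates and two wrist candidates.
scores = {
    ("elbow0", "wrist0"): 0.9,
    ("elbow0", "wrist1"): 0.2,
    ("elbow1", "wrist0"): 0.4,
    ("elbow1", "wrist1"): 0.7,
}
matches = greedy_match(scores)
print(matches)
```

Each matched pair becomes one limb, and limbs that share a keypoint can then be chained together into a full skeleton for one person.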

For the purpose of association, each candidate body part location is compared with the candidate locations of the part it connects to. The alignment of each pair with the part affinity field is checked and a confidence score is assigned.
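One common way to compute such a score is to sample the part affinity field along the segment joining two candidates and average how well it aligns with the segment direction. The sketch below follows that idea; the `paf` callable, the sample count and the toy field are all assumptions for illustration.

```python
import math

def association_score(paf, d1, d2, samples=10):
    """Average alignment between a PAF and the segment from d1 to d2.

    `paf(x, y)` returns the 2D field vector at a point; the score approximates
    the line integral of the field projected onto the segment direction.
    """
    samples = max(samples, 2)
    dx, dy = d2[0] - d1[0], d2[1] - d1[1]
    length = math.hypot(dx, dy)
    if length == 0:
        return 0.0
    ux, uy = dx / length, dy / length
    total = 0.0
    for i in range(samples):
        t = i / (samples - 1)                  # evenly spaced in [0, 1]
        fx, fy = paf(d1[0] + t * dx, d1[1] + t * dy)
        total += fx * ux + fy * uy             # dot product with direction
    return total / samples

def toy_paf(x, y):
    """Toy field: points right inside a horizontal band, zero elsewhere."""
    return (1.0, 0.0) if 1.0 <= y <= 3.0 else (0.0, 0.0)

aligned = association_score(toy_paf, (2.0, 2.0), (6.0, 2.0))
perpendicular = association_score(toy_paf, (2.0, 2.0), (2.0, 6.0))
print(aligned, perpendicular)
```

A pair of candidates that lies along the field scores close to 1, while a pair that cuts across it scores close to 0, which is what allows the matching step to reject wrong connections.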

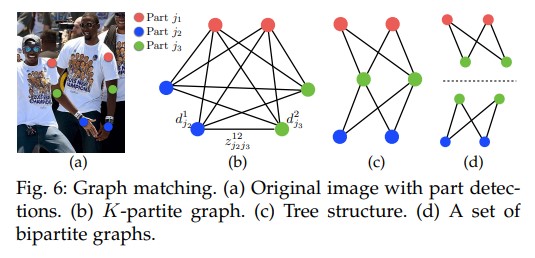

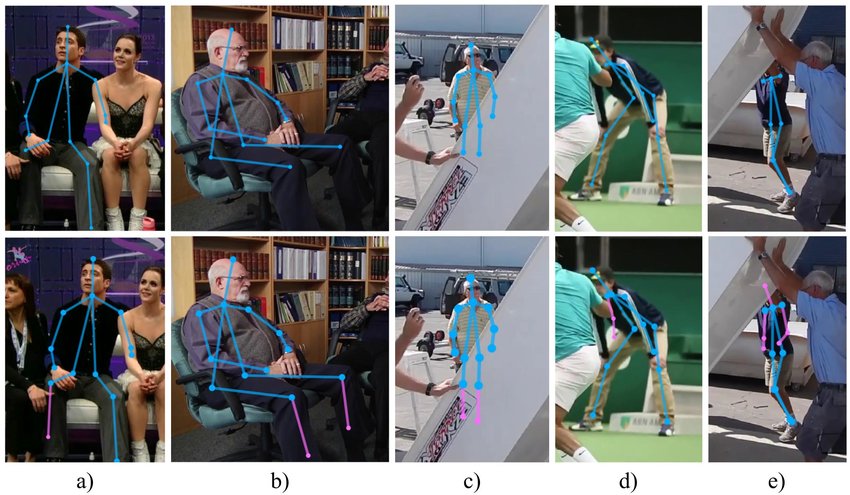

where $S^*_{j,k}(p)$ represents the confidence map of body part j for an individual person k, evaluated at position p.

The ground-truth confidence map that the network learns to predict for part j is obtained by applying the max operator over the individual maps of all people at each position p.
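The sketch below illustrates this construction, assuming the Gaussian form used in the OpenPose paper for each person's map. The value of `sigma`, the function names and the sample coordinates are illustrative assumptions.

```python
import math

def person_confidence(p, part_xy, sigma=1.0):
    """Gaussian peak centred on one person's annotated part location."""
    dist_sq = (p[0] - part_xy[0]) ** 2 + (p[1] - part_xy[1]) ** 2
    return math.exp(-dist_sq / sigma ** 2)

def part_confidence(p, part_locations, sigma=1.0):
    """Ground-truth map for one part: maximum over all people at point p."""
    values = (person_confidence(p, xy, sigma) for xy in part_locations)
    return max(values, default=0.0)

# Hypothetical right-shoulder locations of two people.
shoulders = [(2.0, 2.0), (5.0, 2.0)]
for point in [(2.0, 2.0), (3.5, 2.0), (5.0, 2.0)]:
    print(point, round(part_confidence(point, shoulders), 3))
```

Taking the maximum rather than the average keeps the peaks of nearby people distinct instead of blurring them together.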

Confidence maps act as heatmaps of where body parts are located, whereas the part affinity fields give the direction of each limb. Together, they tell the machine whether two body parts form a pair and, if they do, what the pose of the human body is.
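To turn a heatmap into candidate body part locations, one typical step is to keep only its local maxima. The sketch below is a simple stand-in for that step, assuming a small heatmap stored as a list of rows and a 4-neighbour comparison; the threshold and the sample values are invented.

```python
def find_peaks(heatmap, threshold=0.1):
    """Return (row, col, value) for cells that beat all 4 direct neighbours."""
    peaks = []
    rows, cols = len(heatmap), len(heatmap[0])
    for r in range(rows):
        for c in range(cols):
            value = heatmap[r][c]
            if value < threshold:
                continue
            neighbours = [
                heatmap[nr][nc]
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                if 0 <= nr < rows and 0 <= nc < cols
            ]
            if all(value > n for n in neighbours):
                peaks.append((r, c, value))
    return peaks

# Invented heatmap for one body part with two people in view.
heatmap = [
    [0.0, 0.1, 0.0, 0.0, 0.0],
    [0.1, 0.9, 0.2, 0.1, 0.0],
    [0.0, 0.2, 0.1, 0.8, 0.1],
    [0.0, 0.0, 0.0, 0.1, 0.0],
]
peaks = find_peaks(heatmap)
print(peaks)
```

Each peak is one candidate for that body part, and the candidates from different heatmaps are what the association step pairs up.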

The confidence maps and the PAFs are generated through a prolonged iterative process in which a loss function is applied and the predictions are corrected at every stage of the CNN. During this process, the predictions of each stage are concatenated with the features of the original input and passed on to the next stage, and the pose is finally estimated by greedy inference. The multiple stages and iterative refinement increase the depth of the neural network and help reduce localization errors.

FAQ on OpenPose

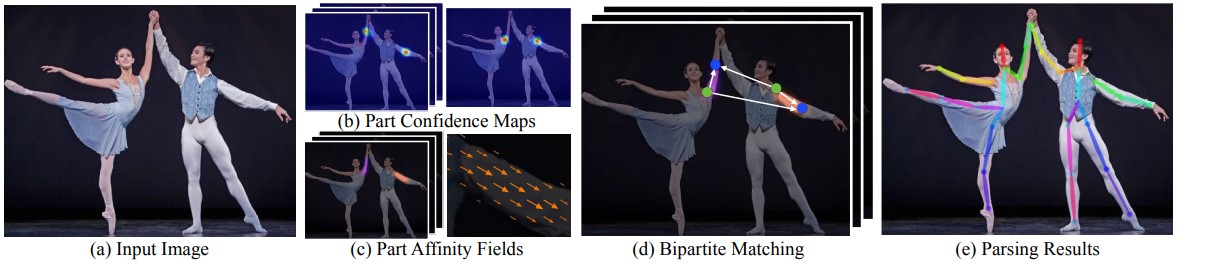

1. What are the problems faced in multi-person detection?

Soln: The first issue is that an image may contain several people, and the machine does not know in advance how many are present. Second, people can be in many different poses, which makes detection more difficult. Third, interactions between people, such as contact or occlusion, make part detection and association hard.

2. What is the bottom-up approach?

Soln: In the bottom-up approach, the system first detects all the keypoints in the image, then associates them with individual bodies, grouping them into one region per person, and finally detects the pose.

3. What are the advantages of OpenPose?

Soln: Since OpenPose is a real-time pose detector, it suits more applications than libraries that cannot run in real time. OpenPose can also detect multiple people at the same time. Assisted living is a major application area for OpenPose, and it is also quite helpful in gaming, machine learning and AI.

Conclusion

OpenPose is a real-time, multi-person human pose detection system that is quite fast compared to other libraries. Systems like this are necessary for machines to visually detect and interpret humans. This is an ongoing field of research in which many companies have invested to optimize these libraries, and OpenPose models can also be run through the OpenCV library.

With this article at OpenGenus, you should have a complete idea of the OpenPose system.