Open-Source Internship opportunity by OpenGenus for programmers. Apply now.

In this article, we have explained the time complexity of Counting Sort for Average case, Worst case and Best case and also, covered the space complexity using Mathematical analysis.

Table of contents:

- Introduction to Counting Sort

- Time Complexity analysis

- Worst case time complexity

- Best case time complexity

- Average case time complexity

- Space Complexity

- Conclusion on time and space complexity

- Comparison with other sorting algorithms

In short,

- Time complexity: O(N+K)

- Space Complexity: O(K)

- Worst case: when data is skewed and range is large

- Best Case: When all elements are same

- Average Case: O(N+K) (N & K equally dominant)

where:

- N is the number of elements

- K is the range of elements (K = largest element - smallest element)

Introduction to Counting Sort

Counting Sort is a sorting algorithm that can be used for sorting elements within a specific range and is based on the frequency/count of each element to be sorted.

It is a integer based, out-place and a stable sorting algorithm.

In this article we shall discuss about the time and space complexity of counting sort algorithm.

Algorithm:

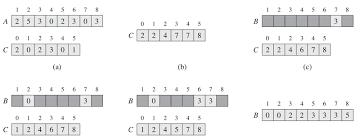

Let A be the given array with n elements, B be the output array (containing sorted elements of A) and k is the max element of A.

COUNTING-SORT(A,B,k)

step 1 : let C [0...k] be a new array

step 2 : for i = 0 to k :

C[i] = 0

step 3 : for j = 1 to A.length :

C[A[j]] =C[A[j]] + 1

// C[i] now contains the number of elements equal to i.

step 4 : for i = 1 to k :

C[i] = C[i] + C[i - 1]

// C[i] now contains the number of elements less than or equal to i.

step 5 : for j = A.length down to 1 :

B[C[A[j]]] = A[j]

C[A[j]] = C[A[j]] - 1

In step 1 we initialize an auxiliary array C of size k . After the for loop of step 2 initializes the array C to all zeros, the for loop of step 3 inspects each input element. If the value of an input element is i, we increment C[i]. Thus, after step 3, C[i] holds the number of input elements equal to i for each integer i = 0, 1, ..., k. Step 4 determine for each i = 0, 1, ..., k how many input elements are less than or equal to i by keeping a running sum of the array C. Finally for loop of step 5 places each element A[j] in its correct sorted position in the output array B.

For a given array A = [2, 5, 3, 0, 2, 3, 0, 3],

Time Complexity analysis

Let us now analyse the time complexity of the above algorithm:

- step 1 takes constant time.

- In step 2, for loop is executed for k times and hence it takes O(k) time.

- In step 3, for loop is executed for n times and hence it takes O(n) time.

- In step 4, for loop is executed for k times and hence it takes O(k) time.

- In step 5, for loop is executed for n times and hence it takes O(n) time.

Thus the overall time complexity is O(n+k)

where:

- N is the number of elements

- K is the range of elements (K = largest element - smallest element)

The basic intution behind this can be that, as counting the occurrence of each element in the input range takes k time and then finding the correct index value of each element in the sorted output array takes n time, thus the total time complexity becomes O(n+k).

Counting sort algorithm is a non comparison based sorting algorithm i.e the arrangement of elements in the array does not affect the flow of algorithm. No matter if the elements in the array are already sorted, reverse sorted or randomly sorted, the algorithm works the same for all these cases and thus the time complexity for all such cases is same i.e O(n+k).

Worst case time complexity

Worst case time complexity is when the data is skewed that is the largest element is significantly large than other elements. This increases the range K.

As the time complexity of algorithm is O(n+k) then, for example, when k is of the order O(n^2), it makes the time complexity O(n+(n^2)), which essentially reduces to O( n^2 ) and if k is of the order O(n^3), it makes the time complexity O(n+(n^3)), which essentially reduces to O( n^3 ). Hence, in this case, the time complexity got worse making it O(k) for such larger values of k. And this is not the end. It can get even worse for further larger values of k.

Thus the worst case time complexity of counting sort occurs when the range k of the elements is significantly larger than the other elements.

Best case time complexity

The best case time complexity occurs when all elements are of the same range that is when k is equal to 1.

In this case, counting the occurrence of each element in the input range takes constant time and then finding the correct index value of each element in the sorted output array takes n time, thus the total time complexity reduces to O(1 + n) i.e O(n) which is linear.

Average case time complexity

To compute the average case time complexity, first we fix N and take different values of k from 1 to infinity, in this case k computes to be (k+1/2) and the average case will be N+(K+1)/2. But as K tends to infinity, K is the dominant factor.

Similarly,now if we vary N, we see that both N and K are equally dominant and hence, we have O(N+K) as average case.

Conclusion on time complexity:

The time complexity of counting sort algorithm is O(n+k) where n is the number of elements in the array and k is the range of the elements.

Counting sort is most efficient if the range of input values is not greater than the number of values to be sorted. In that scenario, the complexity of counting sort is much closer to O(n), making it a linear sorting algorithm.

Space Complexity

In the above algorithm we have used an auxiliary array C of size k, where k is the max element of the given array. Therefore the space complexity of Counting Sort algorithm is O(k).

Space Complexity : O(k)

Larger the range of elements in the given array, larger is the space complexity, hence space complexity of counting sort is bad if the range of integers are very large as the auxiliary array of that size has to be made.

Conclusion on time and space complexity

- Time complexity: O(N+K)

- Space Complexity: O(K)

- Worst case: when data is skewed and range is large

- Best Case: When all elements are same

- Average Case: O(N+K) (N & K equally dominant)

where:

- N is the number of elements

- K is the range of elements (K = largest element - smallest element)

So here we conclude that:

- Counting sort algorithm work best if k is not significantly larger than n. In this case the complexity becomes close to O(n) or linear.

- Also larger the range of elements in the given array, larger is the space complexity.

Comparison with other sorting algorithms

-

While any comparison based sorting algorithm requires O(n (log n)) comparisons, counting sort has a running time of O(n), when the length of the input list is not much smaller than the largest key value, k, in the input array.

-

Counting sort is very efficient in the cases where range is comparable to number of input elements as it performs sorting in linear time and can be a advantage in such cases over other sorting algorithms such as quick sort. When in the worst case quick sort takes O(n^2) time, counting sort only takes O(n) time provided that the range of elements is not very large.

-

Most sorting algorithms perform in quadratic time (O(n^2)), and the two exceptions — heap and merge sort in time (O(n log n)). Counting sort is the only sorting algorithm which performs in linear time (for small range of elements).

-

There is no comparison between any elements, so it is better than comparison based sorting techniques.

-

Since counting sort is suitable for sorting numbers that belong to a well-defined, finite and small range, it can be used as a subprogram in other sorting algorithms like radix sort which can be used for sorting numbers having a large range.

Question

How many comparisons will be made to sort the array arr={1,5,9,3} using counting sort?

With this article at OpenGenus, you must have the complete idea of Counting Sort. Enjoy.