Linear regression is a simple supervised learning algorithm used to predict the value of a dependent variable (y) for a given value of the independent variable (x). It does so by modelling a linear relationship (of the form y = mx + c) between the input (x) and output (y) variables using the given dataset.
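To make the form y = mx + c concrete, here is a minimal sketch that estimates m and c by ordinary least squares for a single input variable. The function name `fit_line` and the sample data are made up for illustration and do not come from any particular library.

```python
import numpy as np

def fit_line(x, y):
    """Least-squares estimates of slope m and intercept c for y = m*x + c."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = x.mean(), y.mean()
    # Slope: covariance of x and y divided by the variance of x
    m = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
    # Intercept: the fitted line passes through the point of means
    c = y_mean - m * x_mean
    return m, c

# Hypothetical data lying exactly on y = 2x + 1
x = [0, 1, 2, 3, 4]
y = [1, 3, 5, 7, 9]

m, c = fit_line(x, y)
prediction = m * 5 + c  # predicted y for a new input x = 5
print(m, c, prediction)
```

Because the sample points lie exactly on a line, the fit recovers that line; with noisy data the same formulas give the line that minimizes the sum of squared errors.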

Linear regression has several applications:

- Prediction of housing prices

- Observational Astronomy

- Finance

In this article we will be discussing the advantages and disadvantages of linear regression.

Advantages of Linear Regression

Simple implementation

Linear regression is a very simple algorithm that can be implemented easily and still give satisfactory results. These models can be trained efficiently even on systems with relatively low computational power, and linear regression has a considerably lower time complexity than many other machine learning algorithms. Its mathematical equations are also fairly easy to understand and interpret, so linear regression is easy to master.

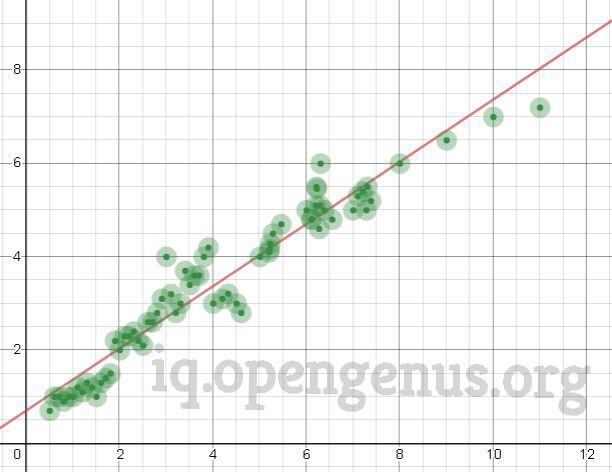

Performance on linearly separable datasets

Linear regression fits linearly separable datasets, that is, datasets in which the output varies linearly with the input, almost perfectly. It is often used to find the nature of the relationship between variables.

Overfitting can be reduced by regularization

Overfitting is a situation that arises when a machine learning model fits a dataset so closely that it captures the noise in the data as well. This negatively impacts the performance of the model and reduces its accuracy on the test set.

Regularization is a technique that adds a penalty on the size of the model's coefficients to the loss function. It is easy to implement and effectively reduces the complexity of the fitted function, which lowers the risk of overfitting.
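As one possible illustration, the sketch below uses ridge (L2) regularization, which is only one of several regularization schemes and is chosen here as an assumption. The helper `ridge_fit`, the synthetic data, and the penalty strength `lam` are all hypothetical. It solves the closed-form ridge equations on centered data so that the intercept is not penalized.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge regression; lam = 0 gives ordinary least squares."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean  # center so the intercept is unpenalized
    n_features = X.shape[1]
    # Solve (Xc^T Xc + lam * I) w = Xc^T yc
    w = np.linalg.solve(Xc.T @ Xc + lam * np.eye(n_features), Xc.T @ yc)
    b = y_mean - x_mean @ w
    return w, b

# Synthetic data: only the first of five features actually matters
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 5))
y = 3.0 * X[:, 0] + rng.normal(scale=0.5, size=30)

w_ols, _ = ridge_fit(X, y, lam=0.0)
w_ridge, _ = ridge_fit(X, y, lam=10.0)

# The penalty shrinks the coefficient vector toward zero
print(np.linalg.norm(w_ols), np.linalg.norm(w_ridge))
```

A larger `lam` shrinks the coefficients more strongly; in practice its value is usually chosen by validation on held-out data.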

Disadvantages of Linear Regression

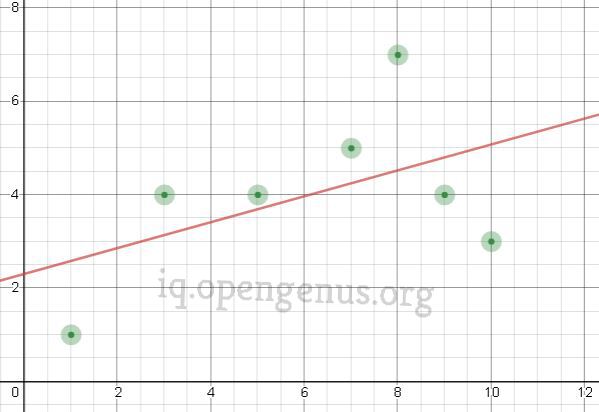

Prone to underfitting

Underfitting: A situation that arises when a machine learning model fails to capture the data properly. This typically occurs when the hypothesis function is too simple to fit the data well.

Example:

Since linear regression assumes a linear relationship between the input and output variables, it fails to fit complex datasets properly. In most real-life scenarios the relationship between the variables of the dataset isn't linear, and hence a straight line doesn't fit the data properly. In such situations a more complex function can capture the data more effectively. Because of this, linear regression models often have low accuracy on such datasets.
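The effect can be reproduced with a small sketch. The data below is synthetic and follows y = x² exactly, an assumption picked to make the underfitting obvious; `r_squared` is a hypothetical helper that computes the coefficient of determination.

```python
import numpy as np

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

# Synthetic non-linear data: a parabola sampled symmetrically around 0
x = np.linspace(-3, 3, 25)
y = x ** 2

line = np.polyfit(x, y, deg=1)  # straight line (linear regression)
quad = np.polyfit(x, y, deg=2)  # more complex hypothesis function

r2_line = r_squared(y, np.polyval(line, x))
r2_quad = r_squared(y, np.polyval(quad, x))
print(r2_line, r2_quad)
```

On this data the straight line explains essentially none of the variance, while the quadratic fit explains nearly all of it.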

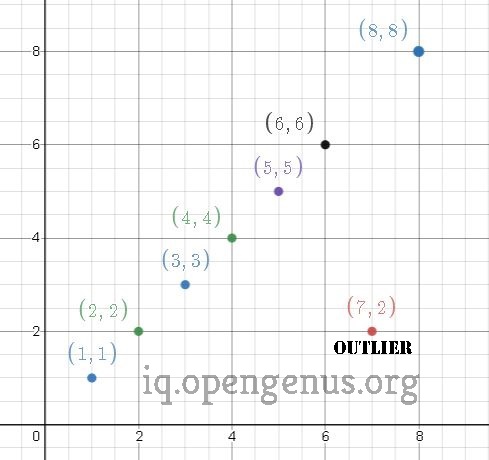

Sensitive to outliers

Outliers of a dataset are anomalies or extreme values that deviate from the other data points of the distribution. Outliers can damage the performance of a machine learning model drastically and can often lead to models with low accuracy.

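Example:

Outliers can have a very large impact on linear regression's performance: because the least-squares fit penalizes squared errors, a single extreme point can pull the fitted line toward itself. Outliers must therefore be dealt with appropriately before linear regression is applied to the dataset.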
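A minimal sketch of this sensitivity, using made-up data: ten points on the line y = 2x + 1, with the last point then replaced by an extreme value.

```python
import numpy as np

x = np.arange(10, dtype=float)
y_clean = 2.0 * x + 1.0  # points exactly on y = 2x + 1

y_outlier = y_clean.copy()
y_outlier[-1] = 100.0    # corrupt a single point (its clean value is 19)

# np.polyfit with deg=1 returns [slope, intercept] of the least-squares line
slope_clean, intercept_clean = np.polyfit(x, y_clean, deg=1)
slope_outlier, intercept_outlier = np.polyfit(x, y_outlier, deg=1)

print(slope_clean, slope_outlier)
```

A single corrupted point out of ten is enough to change the estimated slope substantially, which is why outliers are usually inspected, removed, or handled with a robust method before fitting.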

Linear Regression assumes that the data is independent

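Very often the input variables aren't independent of each other. Such multicollinearity makes the estimated coefficients unstable and hard to interpret, so it should be detected and removed (for example, by dropping or combining strongly correlated inputs) before applying linear regression.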
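One common way to detect multicollinearity is the variance inflation factor (VIF), which regresses each input on all the others and reports 1 / (1 − R²). The sketch below is a hand-written version for illustration; the function name `vif`, the synthetic features, and the cut-off mentioned afterwards are assumptions rather than fixed rules.

```python
import numpy as np

def vif(X):
    """Variance inflation factor of each column of X."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    out = np.empty(p)
    for j in range(p):
        target = X[:, j]
        # Regress column j on an intercept plus all remaining columns
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ coef
        r2 = 1.0 - (resid @ resid) / np.sum((target - target.mean()) ** 2)
        out[j] = 1.0 / (1.0 - r2)
    return out

# Synthetic features: c is almost a copy of a, b is unrelated
rng = np.random.default_rng(0)
a = rng.normal(size=200)
b = rng.normal(size=200)
c = a + 0.05 * rng.normal(size=200)

vifs = vif(np.column_stack([a, b, c]))
print(vifs)
```

A VIF close to 1 suggests an input is not explained by the others, while large values (a threshold of around 5 to 10 is a frequently used rule of thumb) flag inputs that are candidates for removal or combination.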

Conclusion

While the results produced by linear regression may seem impressive on linearly separable datasets, it isn't recommended for most real-world applications, because it produces overly simplified results by assuming a linear relationship between the variables.

With this article at OpenGenus, you must have a complete idea of the advantages and disadvantages of Linear Regression. Enjoy.