Reading time: 30 minutes

Did you know that every time we upload an image to a site like Facebook, it uses facial recognition to recognise the faces in it? Certain governments around the world also use face recognition to identify and catch criminals. And today we can unlock our phones with face unlock!

Introduction

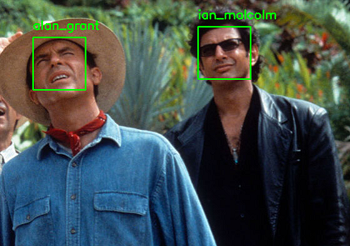

Facial recognition is a technology used for identifying or verifying a person from an image or a video. There are various methods by which facial recognition systems work, but in general, they work by comparing selected facial features from a given image with faces within a database.

It is also described as a biometric, artificial-intelligence-based application that can uniquely identify a person by analysing patterns in the person's facial texture and shape.

Basic operations

These are the basic operations involved in face recognition:

-

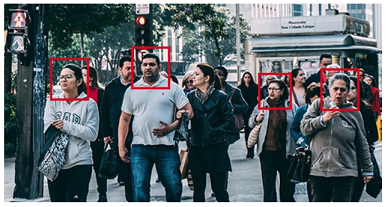

Face Detection : Its the first and most essential step in face recognition.

-

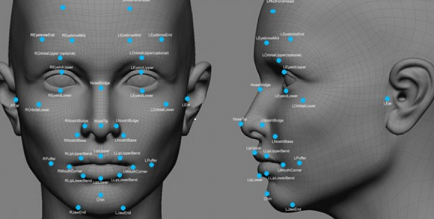

Features Segmentation : Its a simultaneous process, sometimes face detection suit comparatively difficult and requires 3D Head Pose, facial expression, face relighting, Gender, age and lots of other features.

-

Face Recognition : It is less reliable and the accuracy rate is still not up to the mark. Extensive work on Face Recognition have been done, but still it is not up to the mark for implementation point of view .

New techniques are being developed each year to obtain better and more realistic results.

Face detection techniques

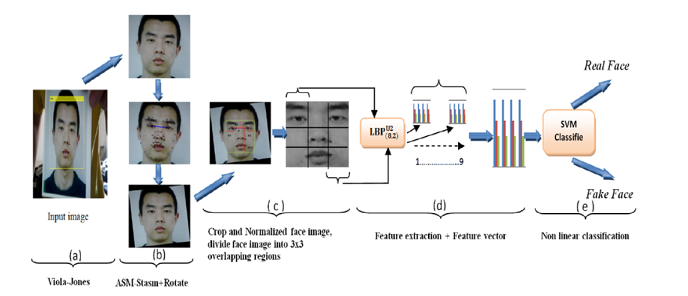

Viola-Jones Algorithm

- It is an efficient algorithm for face detection.

- Its developers, Paul Viola and Michael Jones, showed faces being detected in real time on a webcam feed.

- It was the most stunning demonstration of computer vision and its potential at the time.

- Soon it was implemented in OpenCV, and face detection became synonymous with the Viola-Jones algorithm.

BASIC IDEA:

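- It takes a set of face images (and non-face images) as training data.

- The features of a face are hand-coded as simple Haar-like rectangle features: differences between the pixel sums of adjacent light and dark regions.

- A classifier is trained on these features. The original algorithm uses AdaBoost to select the most useful features and chains the resulting classifiers into a cascade.

- Use this classifier to detect faces by sliding it over the image at different positions and scales.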

DISADVANTAGES: It was unable to detect faces in other orientations or configurations (tilted, upside down, wearing a mask, etc.).
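The hand-coded features in Viola-Jones are Haar-like rectangle features, which can be evaluated in constant time using an integral image (a summed-area table). Below is a minimal NumPy sketch of just that feature-computation step, assuming a grayscale image stored as a 2D array. The AdaBoost training and the cascade are left out, and the function names are illustrative only, not OpenCV's API.

```python
import numpy as np

def integral_image(img):
    """Summed-area table with a zero row/column of padding:
    ii[r, c] == img[:r, :c].sum()."""
    ii = np.zeros((img.shape[0] + 1, img.shape[1] + 1), dtype=np.int64)
    ii[1:, 1:] = img.astype(np.int64).cumsum(axis=0).cumsum(axis=1)
    return ii

def rect_sum(ii, x, y, w, h):
    """Sum of the w x h rectangle whose top-left pixel is (x, y), using 4 lookups."""
    return ii[y + h, x + w] - ii[y, x + w] - ii[y + h, x] + ii[y, x]

def two_rect_feature(ii, x, y, w, h):
    """Two-rectangle Haar-like feature: top half minus bottom half of the window.
    A dark eye band above brighter cheeks gives a strongly negative value."""
    half = h // 2
    return rect_sum(ii, x, y, w, half) - rect_sum(ii, x, y + half, w, half)

# Toy 4 x 4 patch: dark "eye" rows on top, bright "cheek" rows below
patch = np.array([[10, 10, 10, 10],
                  [10, 10, 10, 10],
                  [200, 200, 200, 200],
                  [200, 200, 200, 200]])
ii = integral_image(patch)
print(two_rect_feature(ii, 0, 0, 4, 4))  # 80 - 1600 = -1520
```

In the full detector, AdaBoost picks a small number of such features (at many positions and scales) and arranges them into a cascade, so most non-face windows are rejected after only a few cheap tests.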

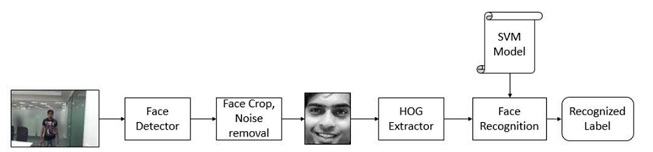

Histogram Of Oriented Gradients

BASIC IDEA:

- For an image I, analyse each pixel P(i) of I and compare it with the pixels directly surrounding it to see which of them are darker.

- Then draw an arrow pointing in the direction in which the image gets darker around P(i).

- This process of assigning an oriented gradient to a pixel P(i) by analysing its surrounding pixels is performed for every pixel in the image.

- If HOG(I) is a function that takes an image I as input, it replaces every pixel with an arrow. Arrows = gradients. Gradients show the flow from light to dark across the entire image.

- Per-pixel gradients are too detailed (complex features like eyes produce a great many of them), so the whole HOG(I) is aggregated into a coarser 'global representation'. The image is broken into squares (for example 16 x 16 pixels), and each square is summarised by a histogram that counts how many gradients point in each major direction, weighted by their strength. The square can then be represented by its dominant direction.

Disadvantage: Although it works well in many applications, it still relies on hand-coded features, which fail when there is a lot of noise or distraction in the background.
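The HOG steps above can be sketched in NumPy. This is a simplified version under stated assumptions: the input is a grayscale image, gradients come from central differences, and each square (cell) gets a 9-bin histogram of unsigned gradient orientations weighted by gradient magnitude. Block normalisation and bin interpolation from the full HOG descriptor are omitted, and `hog_cells` is a hypothetical name.

```python
import numpy as np

def hog_cells(img, cell=16, bins=9):
    """Return an array of shape (rows, cols, bins): one orientation histogram per cell."""
    img = img.astype(np.float64)
    gx = np.zeros_like(img)
    gy = np.zeros_like(img)
    gx[:, 1:-1] = img[:, 2:] - img[:, :-2]   # horizontal central difference
    gy[1:-1, :] = img[2:, :] - img[:-2, :]   # vertical central difference
    magnitude = np.hypot(gx, gy)
    # Unsigned orientation in [0, 180): "towards dark" and "towards light" share a bin
    angle = np.rad2deg(np.arctan2(gy, gx)) % 180
    bin_width = 180 / bins
    n_rows, n_cols = img.shape[0] // cell, img.shape[1] // cell
    hist = np.zeros((n_rows, n_cols, bins))
    for r in range(n_rows):
        for c in range(n_cols):
            window = (slice(r * cell, (r + 1) * cell), slice(c * cell, (c + 1) * cell))
            # "% bins" guards against an angle that rounds up to exactly 180
            idx = (angle[window] // bin_width).astype(int).ravel() % bins
            # Each pixel votes for its orientation bin with its gradient magnitude
            hist[r, c] = np.bincount(idx, weights=magnitude[window].ravel(), minlength=bins)
    return hist

# A vertical edge (dark left half, bright right half): all votes land in the 0-degree bin
edge = np.zeros((16, 16))
edge[:, 8:] = 255
h = hog_cells(edge)
print(h.shape, h[0, 0].argmax())  # (1, 1, 9) 0
```

Concatenating the histograms of all cells gives the feature vector that a classifier (typically a linear SVM) is trained on.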

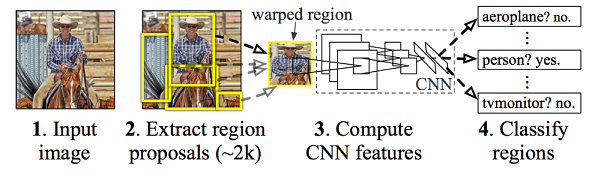

R-CNN

BASIC IDEA :

- R-CNN creates bounding boxes, or regions, using selective search.

- Selective search starts from a fine over-segmentation of the image and repeatedly merges adjacent regions that are similar in texture, color or intensity, so that candidate objects of many different sizes emerge.

- This generates a set of candidate regions (region proposals) for bounding boxes.

- Warp the image inside each bounding box to a fixed size, run it through a pre-trained convolutional neural network, and finally feed the resulting features to an SVM to decide what object the box contains.

- Run the box through a linear regression model to output tighter coordinates for the box once the object has been classified.
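The final regression step predicts offsets relative to the proposal box rather than raw coordinates. Here is a sketch of that encoding, assuming boxes are given as (centre x, centre y, width, height) and using the parameterisation described in the R-CNN paper. The regression model that actually predicts the deltas is not shown, and `encode`/`decode` are hypothetical names.

```python
import numpy as np

def encode(proposal, target):
    """Regression targets (tx, ty, tw, th) that turn the proposal into the target box."""
    px, py, pw, ph = proposal
    gx, gy, gw, gh = target
    return np.array([(gx - px) / pw, (gy - py) / ph, np.log(gw / pw), np.log(gh / ph)])

def decode(proposal, deltas):
    """Apply predicted deltas (dx, dy, dw, dh) to a proposal to get a tighter box."""
    px, py, pw, ph = proposal
    dx, dy, dw, dh = deltas
    return np.array([px + pw * dx, py + ph * dy, pw * np.exp(dw), ph * np.exp(dh)])

proposal = (50.0, 50.0, 40.0, 60.0)      # loose box from selective search
ground_truth = (54.0, 47.0, 32.0, 66.0)  # tight box around the object
t = encode(proposal, ground_truth)       # what the regressor is trained to output
print(np.allclose(decode(proposal, t), ground_truth))  # True
```

Expressing the centre shift relative to the box size, and width/height as log-ratios, keeps the regression targets on a similar scale for small and large boxes.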

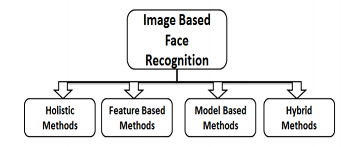

Face recognition techniques

Face recognition is such a challenging yet interesting problem that it has attracted researchers from different backgrounds, such as psychology, pattern recognition, neural networks, computer vision and computer graphics.

The following methods are used for face recognition:

- Holistic Matching

- Feature Based (structural)

- Model Based

- Hybrid Methods

Holistic Matching

In this approach, the complete face region is taken as the input to the face recognition system. Well-known examples of holistic methods include Eigenfaces (which uses principal component analysis, PCA), linear discriminant analysis (LDA) and independent component analysis (ICA).

The Eigenfaces method treats face recognition as a two-dimensional recognition problem. Let's look at its steps:

- Insert a set of images into a database. These images are called the training set, because they are used to create the eigenfaces and are compared against new images.

- Eigenfaces are made by extracting characteristic features from the faces. The input images are normalized to line up the eyes and mouths, and then resized so that they all have the same size. Eigenfaces can now be extracted from the image data using a mathematical tool called PCA.

- Each image is now represented as a vector of weights (its projection onto the eigenfaces), and the system is ready to accept queries. The weights of an incoming unknown image are computed and compared to the weights of the images already in the system.

- If the distance between the input image's weights and those of every stored image is over a given threshold, the input image is considered unidentified. Otherwise, it is identified by finding the image in the database whose weights are closest to the weights of the input image.

- The image in the database with the closest weights is returned as a hit to the user.
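The Eigenfaces steps can be sketched with NumPy, computing PCA through a singular value decomposition. This is a minimal illustration under stated assumptions: the faces are already aligned, equally sized and flattened into vectors, `k` and `threshold` are hypothetical values you would tune on real data, and matching is a plain Euclidean nearest neighbour on the weight vectors.

```python
import numpy as np

def train_eigenfaces(faces, k):
    """faces: (n_images, n_pixels) array of aligned, equally sized, flattened faces.
    Returns the mean face, the top-k eigenfaces and each training image's weights."""
    X = np.asarray(faces, dtype=np.float64)
    mean = X.mean(axis=0)
    A = X - mean                                   # centre the training set
    # Rows of Vt are the principal components (eigenvectors of the covariance matrix)
    _, _, Vt = np.linalg.svd(A, full_matrices=False)
    eigenfaces = Vt[:k]                            # shape (k, n_pixels)
    weights = A @ eigenfaces.T                     # shape (n_images, k)
    return mean, eigenfaces, weights

def recognize(face, mean, eigenfaces, weights, threshold):
    """Index of the training image with the closest weights, or None if even the
    closest one is farther away than `threshold` (an unidentified face)."""
    w = (np.asarray(face, dtype=np.float64) - mean) @ eigenfaces.T
    distances = np.linalg.norm(weights - w, axis=1)
    best = int(np.argmin(distances))
    return best if distances[best] <= threshold else None

# Toy data: five random 8 x 8 "faces", flattened to 64-pixel vectors
rng = np.random.default_rng(0)
faces = rng.random((5, 64))
mean, eigenfaces, weights = train_eigenfaces(faces, k=4)
print(recognize(faces[2], mean, eigenfaces, weights, threshold=0.5))  # 2
```

Comparing short weight vectors instead of full images is what makes the query step cheap: each stored face costs only `k` numbers.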

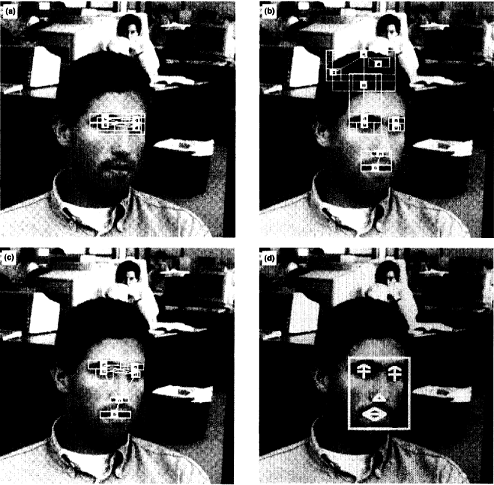

Feature-based

Here, local features such as the eyes, nose and mouth are first extracted, and their locations, geometry and appearance are fed into a structural classifier. A challenge for feature extraction methods is feature "restoration": the system tries to retrieve features that are invisible due to large variations, e.g. in head pose when matching a frontal image with a profile image.

Different extraction methods:

- Generic methods based on edges, lines, and curves

- Feature-template-based methods

- Structural matching methods
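A very small geometric version of the feature-based idea: represent a face by normalised distances between a few landmarks and match by nearest neighbour. This is an illustrative sketch, not a specific published method. It assumes the landmarks (eyes, nose, mouth) have already been located by some detector; the landmark order, the names and the `match` helper are hypothetical. Real systems also model the appearance around each feature.

```python
import itertools
import numpy as np

def geometric_features(landmarks):
    """Pairwise distances between facial landmarks, divided by the distance between
    the first two points (assumed to be the eyes) so that face size does not matter."""
    pts = np.asarray(landmarks, dtype=np.float64)
    eye_distance = np.linalg.norm(pts[0] - pts[1])
    return np.array([np.linalg.norm(pts[i] - pts[j]) / eye_distance
                     for i, j in itertools.combinations(range(len(pts)), 2)])

def match(query_landmarks, gallery):
    """Name of the gallery face whose feature vector is nearest to the query's."""
    q = geometric_features(query_landmarks)
    return min(gallery, key=lambda name: np.linalg.norm(geometric_features(gallery[name]) - q))

# Hypothetical landmarks in (x, y) order: left eye, right eye, nose tip, mouth corners
gallery = {
    "alice": [(30, 40), (70, 40), (50, 60), (38, 80), (62, 80)],
    "bob":   [(30, 40), (70, 40), (50, 70), (35, 85), (65, 85)],
}
# Alice's face photographed twice as large and shifted in the frame
query = [(2 * x + 5, 2 * y + 5) for x, y in gallery["alice"]]
print(match(query, gallery))  # alice
```

Because only relative distances are used, the representation ignores position, scale and in-plane rotation, but it cannot cope with landmarks that are hidden, which is exactly the feature "restoration" problem mentioned above.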

Model Based

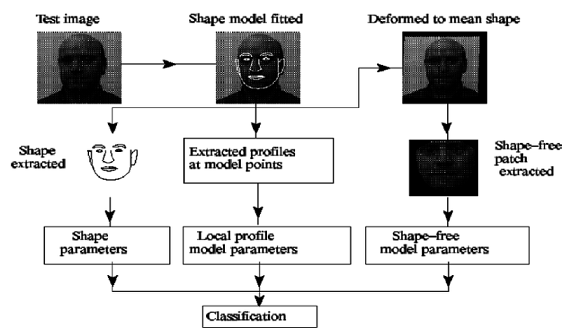

The model-based approach tries to build a model of a face. A new sample is fitted to the model, and the parameters of the fitted model are used to recognise the image. Model-based methods can be classified as 2D or 3D.
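One common 2D model-based technique is a statistical shape model, as used in Active Shape Models: a face shape is described as a mean shape plus a weighted sum of modes of variation, and the fitted weights (the model parameters) are what get compared. The sketch below assumes the landmark shapes are already aligned and in correspondence; the function names are hypothetical, and the iterative image search of a full Active Shape Model is omitted.

```python
import numpy as np

def build_shape_model(shapes, k):
    """shapes: (n, 2m) array, each row m aligned landmarks as x1, y1, ..., xm, ym.
    Returns the mean shape, the top-k modes of variation and their variances."""
    X = np.asarray(shapes, dtype=np.float64)
    mean = X.mean(axis=0)
    _, s, Vt = np.linalg.svd(X - mean, full_matrices=False)
    variances = s[:k] ** 2 / (len(X) - 1)   # eigenvalues of the sample covariance
    return mean, Vt[:k], variances

def fit_parameters(shape, mean, modes, variances):
    """Model parameters b with shape approximately mean + b @ modes, each limited to
    +/- 3 standard deviations so that the fitted shape stays plausible for a face."""
    b = (np.asarray(shape, dtype=np.float64) - mean) @ modes.T
    limit = 3 * np.sqrt(variances)
    return np.clip(b, -limit, limit)

# Toy training set: 6 random shapes of 5 landmarks each (10 numbers per shape)
rng = np.random.default_rng(1)
shapes = rng.normal(size=(6, 10))
mean, modes, variances = build_shape_model(shapes, k=3)
b = fit_parameters(shapes[0], mean, modes, variances)
print(b.shape)  # (3,)
```

Recognition can then compare the parameter vectors of two faces, for example by nearest neighbour. The clipping step is what makes this a model rather than a plain projection: the sample must be explained by a plausible face shape.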

Hybrid Methods

This approach uses a combination of holistic and feature extraction methods. 3D images are generally used in these methods. The face is captured in 3D to record the curves of the eye sockets or the shapes of the chin and forehead. Even a face in profile can be used, because the system uses depth and an axis of measurement, which gives it enough information to construct a full face. The 3D system consists of detection, position, measurement, representation and matching:

- Detection: Capturing a face by scanning a photograph or photographing a person's face in real time.

- Position: Determining the location, size and angle of the head.

- Measurement: Assigning measurements to each curve of the face to make a template.

- Representation: Converting the template into a numerical representation of the face.

- Matching: Comparing the received data with faces in the database. The 3D image to be compared with an existing 3D image needs to have no alterations.
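The position and matching steps for 3D faces can be illustrated with rigid alignment: remove the translation and rotation between two 3D point sets, then measure how far apart they are. This sketch uses the Kabsch algorithm and assumes corresponding points are already known (real 3D systems often find correspondences iteratively, e.g. with ICP). Scale is not normalised, and `align_and_score` is a hypothetical name.

```python
import numpy as np

def align_and_score(probe, gallery):
    """probe, gallery: (m, 3) arrays of corresponding 3D facial points.
    Rigidly aligns probe onto gallery (Kabsch algorithm) and returns the RMS
    distance between them: lower means a better match."""
    P = np.asarray(probe, dtype=np.float64)
    G = np.asarray(gallery, dtype=np.float64)
    Pc, Gc = P - P.mean(axis=0), G - G.mean(axis=0)   # remove translation
    U, _, Vt = np.linalg.svd(Pc.T @ Gc)
    d = np.sign(np.linalg.det(Vt.T @ U.T))            # -1 would mean a reflection
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T           # best rotation probe -> gallery
    aligned = Pc @ R.T
    return float(np.sqrt(np.mean(np.sum((aligned - Gc) ** 2, axis=1))))

# The same toy "face" scanned again after the head turned 30 degrees and moved
rng = np.random.default_rng(2)
face = rng.normal(size=(20, 3))
theta = np.deg2rad(30)
Rz = np.array([[np.cos(theta), -np.sin(theta), 0.0],
               [np.sin(theta),  np.cos(theta), 0.0],
               [0.0,            0.0,           1.0]])
rescan = face @ Rz.T + np.array([1.0, 2.0, 3.0])
print(align_and_score(face, rescan) < 1e-9)   # True
```

Aligning first and comparing second mirrors the order of the steps above: the pose of the head is factored out, so the remaining distance reflects the shape of the face itself.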

Recent research work is largely based on the hybrid approach.