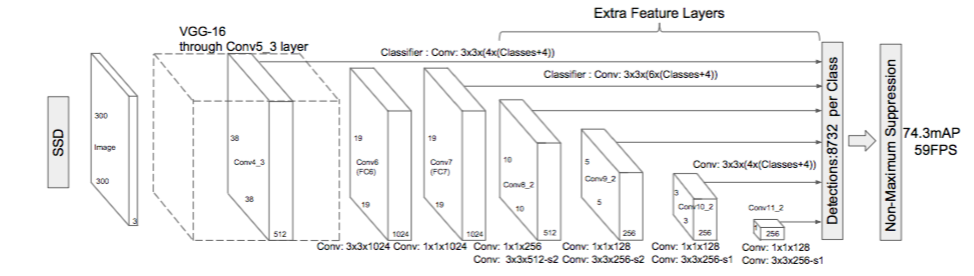

Single Shot MultiBox Detector (SSD) is an object detection algorithm that uses a modified VGG-16 network as its backbone. The paper first appeared on arXiv in December 2015 and was presented at ECCV 2016. It set new records for speed and accuracy in object detection, scoring over 74% mAP (mean Average Precision) at 59 frames per second on the Pascal VOC 2007 test set. It was also evaluated on COCO.

Architecture

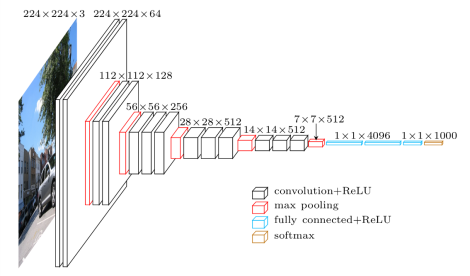

SSD’s architecture builds on the venerable VGG-16 architecture, but discards the fully connected layers.

VGG-16 was chosen as the base network because of its:

- strong performance in high quality image classification tasks

- popularity for problems where transfer learning helps in improving results

In place of the original VGG fully connected layers, a set of auxiliary convolutional layers (from conv6 onwards) was added. These layers extract features at multiple scales and progressively reduce the spatial size of the input to each subsequent layer.
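As a rough illustration of how the auxiliary layers shrink the feature maps, the sketch below applies the standard convolution output-size formula to the extra layers. The layer names, kernel sizes, strides, paddings and the 19 × 19 starting size are taken from the SSD300 configuration described in the SSD paper, not from this article, so treat them as assumptions.

```python
def conv_output_size(size, kernel, stride, padding):
    """Spatial size after a convolution (standard floor formula)."""
    return (size + 2 * padding - kernel) // stride + 1


# Assumed SSD300 auxiliary layers: (name, kernel, stride, padding).
# Each is preceded by a 1x1 convolution that leaves the size unchanged,
# so only the 3x3 layers are listed here.
EXTRA_LAYERS = [
    ("conv8_2", 3, 2, 1),
    ("conv9_2", 3, 2, 1),
    ("conv10_2", 3, 1, 0),
    ("conv11_2", 3, 1, 0),
]


def feature_map_sizes(start_size=19):
    """Return the feature-map side length after each auxiliary layer."""
    sizes = {}
    size = start_size
    for name, kernel, stride, padding in EXTRA_LAYERS:
        size = conv_output_size(size, kernel, stride, padding)
        sizes[name] = size
    return sizes


if __name__ == "__main__":
    for name, size in feature_map_sizes().items():
        print(f"{name}: {size} x {size}")
```

Under these assumptions the maps go from 19 × 19 down to 1 × 1, which is what lets the detector look for large objects on the coarse maps and small objects on the fine ones.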

Multibox

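SSD’s bounding-box prediction is derived from MultiBox, an earlier method for regressing class-agnostic box proposals. MultiBox’s loss function combined two critical components that made their way into SSD: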

1. Confidence Loss

This measures how confident the network is of the objectness of the computed bounding box. Categorical cross-entropy is used to compute this loss.

2. Location Loss

This measures how far the network’s predicted bounding boxes are from the ground-truth boxes in the training set. MultiBox uses the L2 norm here; SSD itself replaces it with a Smooth L1 loss.

multibox_loss = confidence_loss + alpha * location_loss

The alpha term balances the contribution of the location loss against the confidence loss.
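The formula above can be sketched for a single matched box as follows. This is a minimal illustration rather than the reference implementation: the function names are made up for this example, the location term is taken to be the squared L2 distance, and the averaging over matched boxes that a real detector performs is left out.

```python
import math


def confidence_loss(logits, true_class):
    """Categorical cross-entropy for one box, computed from raw class scores."""
    max_logit = max(logits)  # subtract the max for numerical stability
    log_sum = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
    return log_sum - logits[true_class]


def location_loss(pred_box, true_box):
    """Squared L2 distance between predicted and ground-truth coordinates."""
    return sum((p - t) ** 2 for p, t in zip(pred_box, true_box))


def multibox_loss(logits, true_class, pred_box, true_box, alpha=1.0):
    """confidence_loss + alpha * location_loss for a single box."""
    return (confidence_loss(logits, true_class)
            + alpha * location_loss(pred_box, true_box))


if __name__ == "__main__":
    loss = multibox_loss(
        logits=[2.0, 0.5, -1.0],   # illustrative class scores
        true_class=0,
        pred_box=[0.1, 0.1, 0.5, 0.5],
        true_box=[0.1, 0.1, 0.6, 0.5],
        alpha=1.0,
    )
    print(f"multibox loss: {loss:.4f}")
```

Raising alpha makes box placement errors count for more relative to classification errors; lowering it does the opposite.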

Training & Running SSD

Datasets

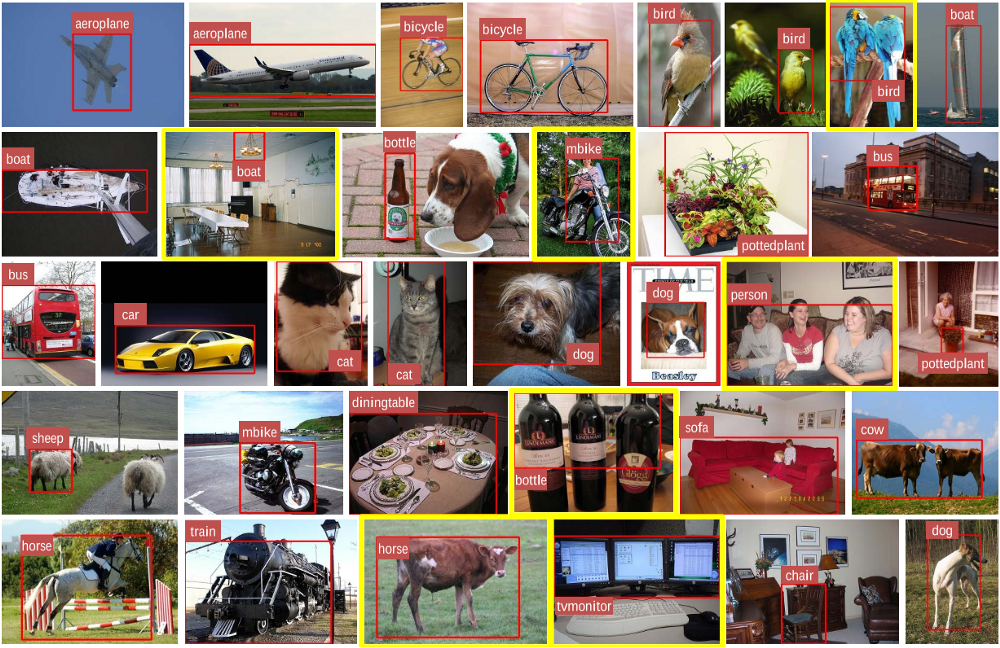

You will need training and test datasets with ground truth bounding boxes and assigned class labels (only one per bounding box). The Pascal VOC and COCO datasets are a good starting point.
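One way to picture such a dataset entry is a small record per image that holds its boxes, each with exactly one label. The class names, field names and file path below are hypothetical and chosen only for illustration; they do not match the on-disk format of Pascal VOC or COCO.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class GroundTruthBox:
    """One ground-truth box in pixel coordinates with a single class label."""
    xmin: float
    ymin: float
    xmax: float
    ymax: float
    label: str  # exactly one class label per box

    def __post_init__(self):
        # Reject degenerate boxes early so they never reach training.
        if self.xmin >= self.xmax or self.ymin >= self.ymax:
            raise ValueError("box must have positive width and height")


@dataclass
class AnnotatedImage:
    """An image path together with all of its ground-truth boxes."""
    path: str
    boxes: list = field(default_factory=list)


example = AnnotatedImage(
    path="images/000001.jpg",  # hypothetical file name
    boxes=[
        GroundTruthBox(48, 240, 195, 371, "dog"),
        GroundTruthBox(8, 12, 352, 498, "person"),
    ],
)
```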

Playing With SSD

Vehicle Detection

Code for SSD using PyTorch

Performance

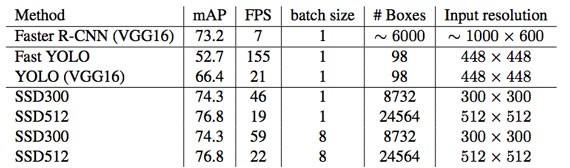

Performance Of SSD

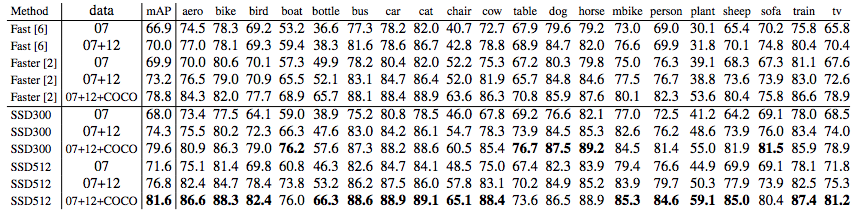

The model is trained using SGD with an initial learning rate of 0.001, momentum of 0.9, weight decay of 0.0005, and a batch size of 32. On the VOC2007 test set, using an Nvidia Titan X, SSD300 achieves 74.3% mAP at 59 FPS, compared with 73.2% mAP at 7 FPS for Faster R-CNN and 63.4% mAP at 45 FPS for YOLO.
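To make the role of these hyperparameters concrete, here is a minimal sketch of a single SGD update on one scalar weight. It follows one common convention (weight decay added to the gradient, learning rate applied to the velocity); the original training code may differ in detail, and the function name is made up for this example.

```python
def sgd_step(weight, grad, velocity, lr=0.001, momentum=0.9, weight_decay=0.0005):
    """One SGD update with momentum and L2 weight decay on a scalar weight."""
    grad = grad + weight_decay * weight      # weight decay acts as an extra gradient term
    velocity = momentum * velocity + grad    # accumulate a running direction
    weight = weight - lr * velocity          # step against the accumulated direction
    return weight, velocity


if __name__ == "__main__":
    w, v = 1.0, 0.0
    for step in range(3):
        w, v = sgd_step(w, grad=0.5, velocity=v)  # constant illustrative gradient
        print(f"step {step}: weight={w:.7f}, velocity={v:.7f}")
```

With a constant gradient the velocity grows from step to step, which is the speed-up that momentum is meant to provide.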

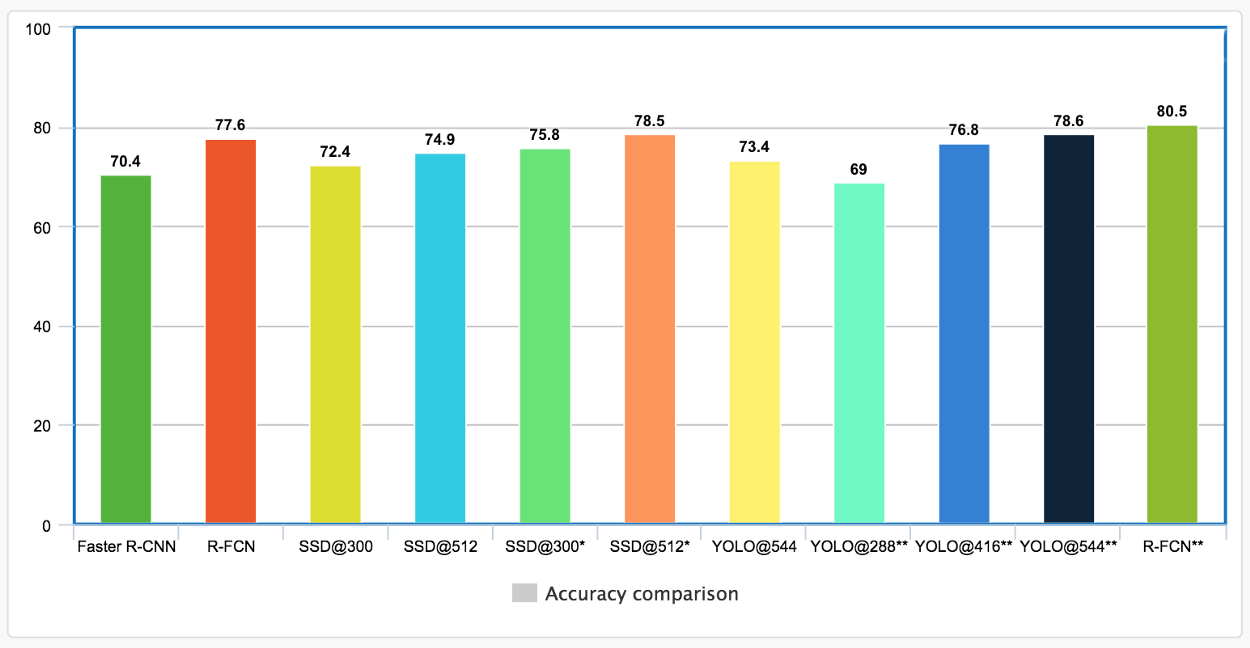

The figure above compares the accuracy of different methods. SSD is evaluated with input image sizes of 300 × 300 and 512 × 512.

Results

There are two models: SSD300 and SSD512.

- SSD300: 300×300 input image, lower resolution, faster.

- SSD512: 512×512 input image, higher resolution, more accurate.

Accuracy Comparison

VGG

VGG-16 is the CNN architecture that SSD uses as its base network. It consists of 16 weight layers. Before studying the SSD algorithm, you should understand the VGG architecture, since SSD's model builds on it.