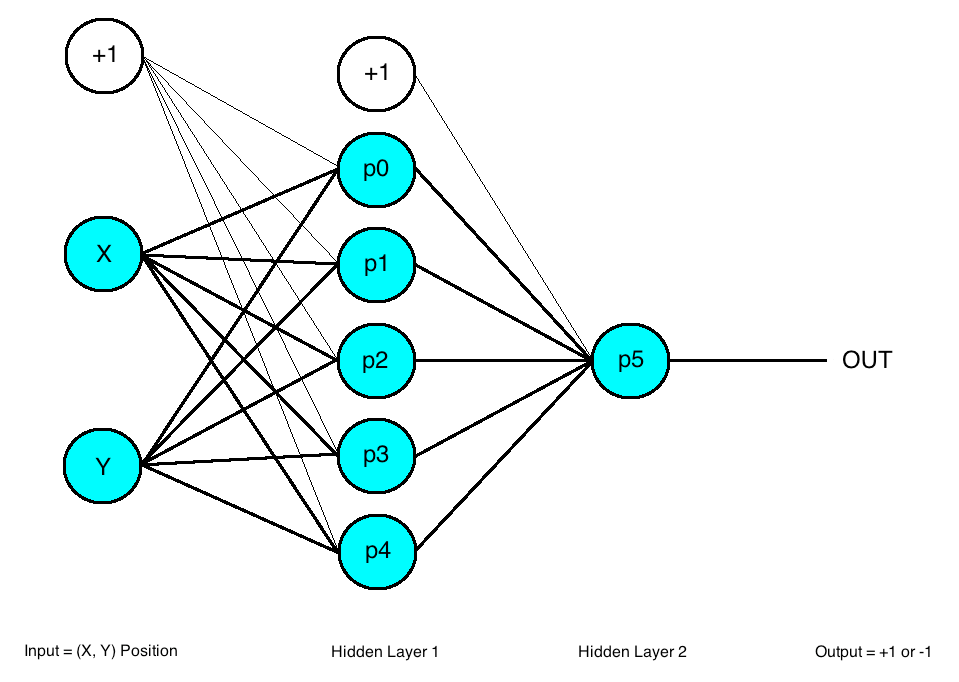

Multilayer Perceptrons (MLPs) are the building blocks of neural networks. They consist of one or more layers of neurons. Data is fed to the input layer, there may be one or more hidden layers providing levels of abstraction, and predictions are made on the output layer, also called the visible layer.
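
To make the flow from input layer to hidden layer to output layer concrete, here is a minimal sketch of a single forward pass in plain Python. The layer sizes (4 inputs, 5 hidden neurons, 3 output classes), the ReLU and softmax activations, the random untrained weights, and the helper names (`dense`, `init_layer`, `mlp_forward`) are all assumptions chosen for illustration, not something the description above prescribes.

```python
import math
import random

def relu(z):
    # Common hidden-layer activation: pass positives through, clip negatives to 0.
    return max(0.0, z)

def identity(z):
    # No activation; used to produce raw scores before softmax.
    return z

def softmax(scores):
    # Turn raw scores into probabilities that sum to 1.
    # Subtracting the maximum keeps exp() numerically stable.
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dense(inputs, weights, biases, activation):
    # One fully connected layer: each neuron computes
    # activation(weighted sum of all inputs + bias).
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(total))
    return outputs

def init_layer(n_inputs, n_neurons, rng):
    # Hypothetical initialisation: small random weights, zero biases.
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
               for _ in range(n_neurons)]
    biases = [0.0] * n_neurons
    return weights, biases

def mlp_forward(x, hidden_layer, output_layer):
    # Input layer -> hidden layer -> output layer.
    hidden = dense(x, hidden_layer[0], hidden_layer[1], relu)
    scores = dense(hidden, output_layer[0], output_layer[1], identity)
    return softmax(scores)

rng = random.Random(0)                # fixed seed so runs are repeatable
hidden_layer = init_layer(4, 5, rng)  # 4 inputs feed 5 hidden neurons
output_layer = init_layer(5, 3, rng)  # 5 hidden neurons feed 3 output classes

probs = mlp_forward([0.2, -1.0, 0.5, 3.0], hidden_layer, output_layer)
print(probs)  # three class probabilities from an untrained network
```

Because the weights are untrained, the probabilities are meaningless as predictions; the sketch only shows how data moves through the layers. For a regression problem, one would typically use a single output neuron and skip the softmax.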

MLPs are suitable for:

- Classification prediction problems, where inputs are assigned a class or label.

- Regression prediction problems, where a real-valued quantity is predicted given a set of inputs.

- Tabular datasets.

They are very flexible and can be used generally to learn a mapping from inputs to outputs. This flexibility allows them to be applied to other types of data. For example:

- The pixels of an image can be reduced to one long row of data and fed into an MLP.

- The words of a document can also be reduced to one long row of data and fed to an MLP.

- The lag observations for a time series prediction problem can be reduced to a long row of data and fed to an MLP.
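
The three reductions above can be sketched in a few lines of plain Python. The helper names (`flatten_image`, `bag_of_words`, `lag_rows`) and the toy data are hypothetical. Word counting over a fixed vocabulary is only one assumed way to turn a document into a row; the text above does not say which encoding to use.

```python
def flatten_image(image):
    # Turn a 2-D grid of pixel values into one long row, reading row by row.
    return [pixel for row in image for pixel in row]

def bag_of_words(document, vocabulary):
    # Assumed encoding: count how often each vocabulary word occurs.
    tokens = document.lower().split()
    return [tokens.count(word) for word in vocabulary]

def lag_rows(series, n_lags):
    # Build (inputs, target) pairs: the previous n_lags observations
    # are the inputs, and the next observation is the value to predict.
    rows = []
    for i in range(n_lags, len(series)):
        rows.append((series[i - n_lags:i], series[i]))
    return rows

# A tiny 2x3 grayscale "image".
image = [[0, 255, 0],
         [255, 255, 255]]
image_row = flatten_image(image)
print(image_row)   # [0, 255, 0, 255, 255, 255]

# A short document encoded against a three-word vocabulary.
vocabulary = ["cat", "dog", "sat"]
text_row = bag_of_words("The cat sat where the other cat sat", vocabulary)
print(text_row)    # [2, 0, 2]

# A time series turned into rows of 3 lag observations plus a target.
series = [10, 12, 15, 14, 18]
windows = lag_rows(series, 3)
print(windows)     # [([10, 12, 15], 14), ([12, 15, 14], 18)]
```

Each resulting row has a fixed length, which is what an MLP's input layer needs. Note that flattening discards the spatial layout of the image and the word order of the document, which is one reason other model types may appear better suited to such data.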

As such, if your data is in a form other than a tabular dataset, such as an image, document, or time series, I would recommend at least testing an MLP on your problem. The results can be used as a baseline to confirm that other models that appear better suited actually add value.

You may use a multilayer perceptron on the following kinds of data:

- Image data

- Text data

- Time series data