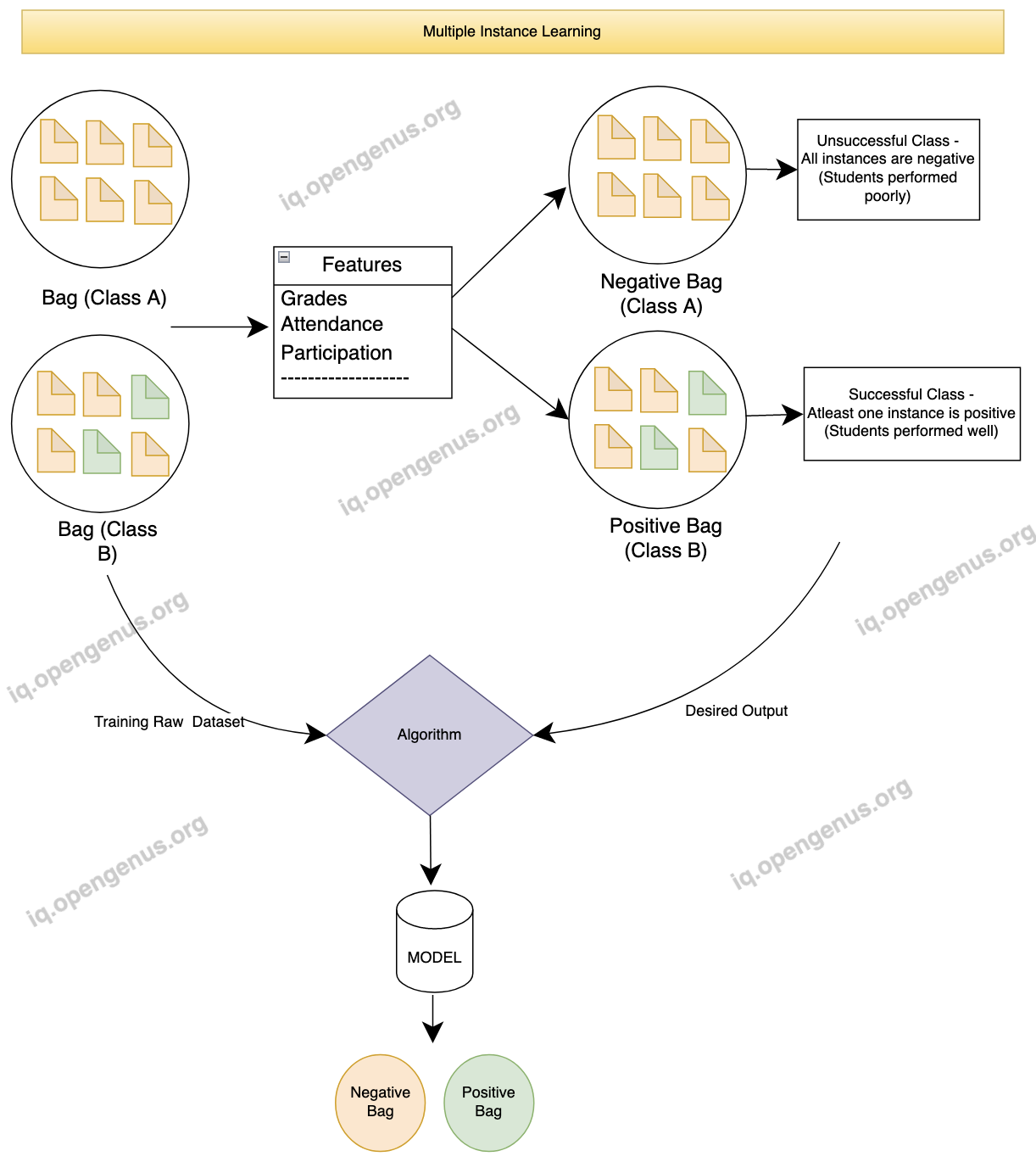

In the field of machine learning, Multiple Instance Learning (MIL) is a paradigm that expands upon traditional supervised learning. MIL differs from conventional supervised learning, where each training instance is individually labeled. Instead, MIL operates on sets of instances called bags, with each bag associated with a single label. The objective is to develop a model that can effectively classify unseen bags based on their content. MIL has attracted considerable attention due to its capacity to utilize weakly labeled data and its suitability for addressing diverse real-world problems.

Introduction

Multiple Instance Learning (MIL) is a form of supervised learning where the training data is organized into groups called "bags," rather than individual instances. Each bag contains multiple instances, and the labels are assigned to the bags instead of individual instances. This makes MIL suitable for scenarios where the labels are uncertain or unavailable at the instance level, but can be determined for the bags as a whole.

Important terminology:

1. Traditional Supervised Learning:

Traditional supervised learning trains an algorithm on a labeled dataset, in which every input example carries its own label, so that the resulting model can classify data or predict outcomes accurately.

2. Multi-instance Learning:

Multi-instance learning (MIL) is a form of weakly supervised learning where training instances are organized into sets, called bags. Unlike traditional supervised learning, labels are provided for entire bags rather than for the individual instances within them. This approach is useful for problems where only weak, group-level labels are available.

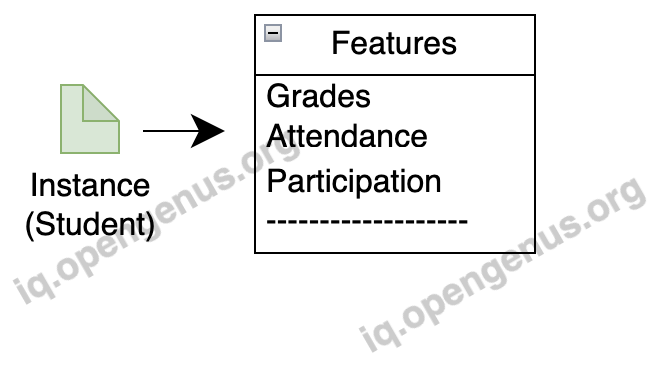

3. Instances:

Each instance is associated with a feature vector that describes its characteristics. In traditional supervised learning, each instance also carries its own label; in MIL, instance labels are unknown and only the bag is labeled.

In the student and class example used in this article (each class is a bag and each student is an instance), the features can include attendance, grades, participation, etc. The labels, indicating whether a class performed well or not, are assigned to the bags based on the collective performance of the students within that class.

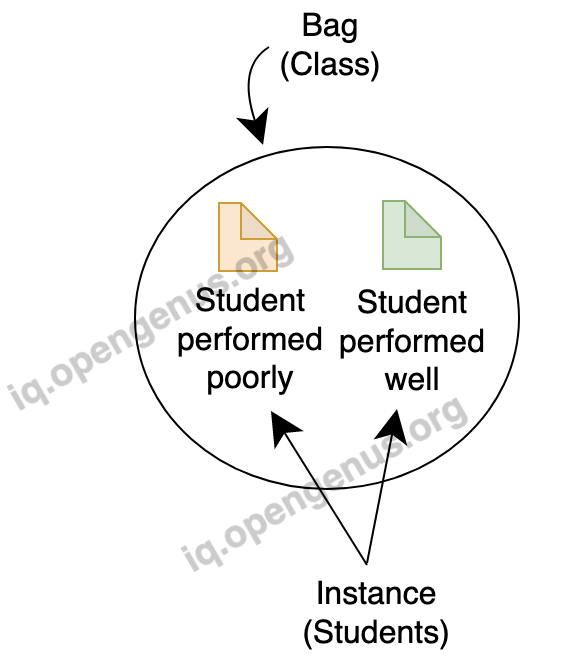

4. Bags:

A bag is a collection of instances and is the unit that carries the label in multiple instance learning. For example, let's consider a scenario where we want to predict whether a class of students has performed well or not. Here, each bag represents a class, and the instances within the bag are the individual students.

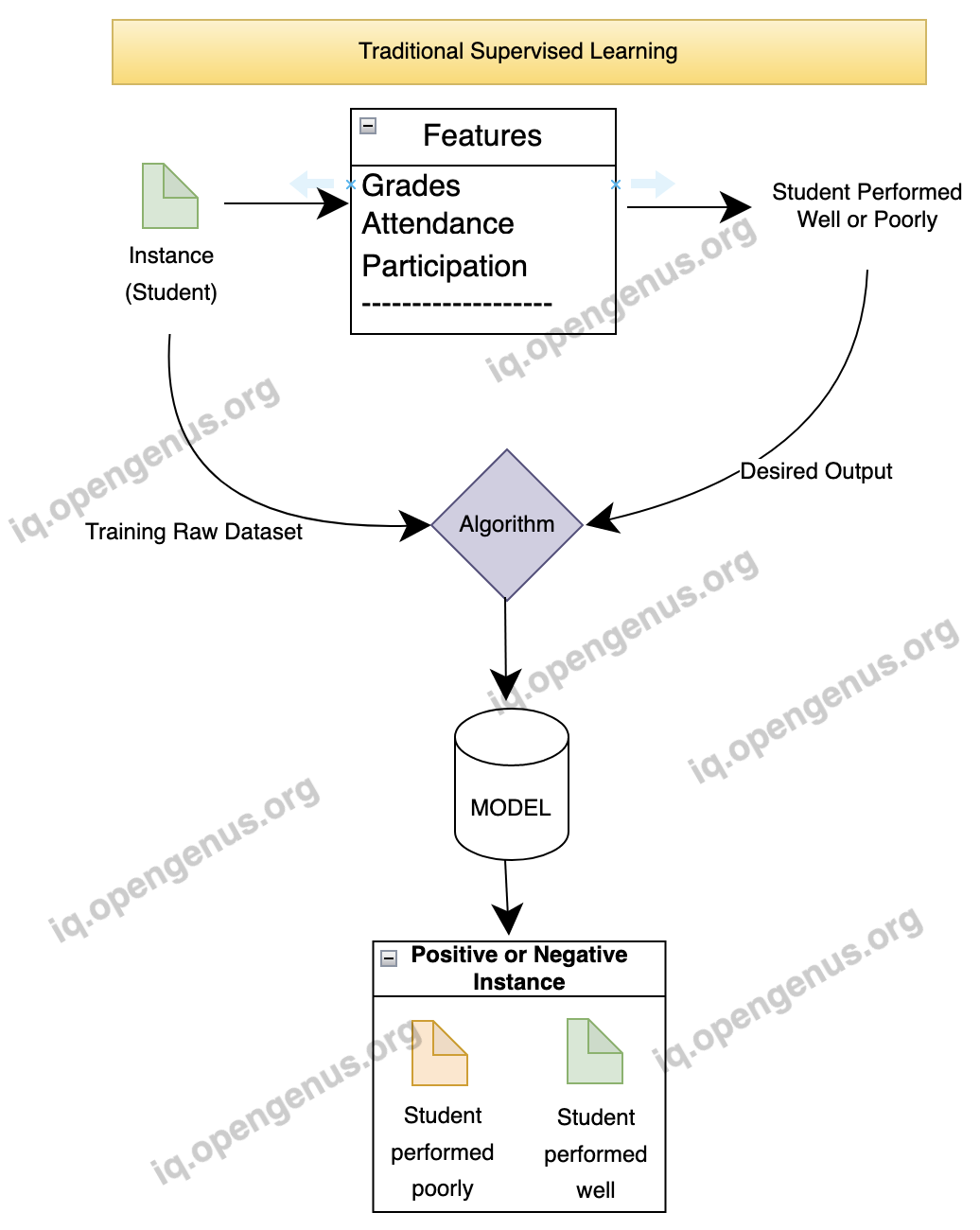
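The instance and bag terminology above can be sketched as a small data structure. This is only an illustration: the names `Student`, `ClassBag`, and `performed_well`, and the value ranges in the comments, are assumptions made for this example and do not come from any library.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Student:
    """One instance: a feature vector describing a single student."""
    attendance: float      # assumed range: 0.0 to 1.0
    grade: float           # assumed range: 0 to 100
    participation: float   # assumed range: 0.0 to 1.0

    def features(self) -> List[float]:
        return [self.attendance, self.grade, self.participation]


@dataclass
class ClassBag:
    """One bag: a class of students that carries a single label."""
    students: List[Student]
    performed_well: bool   # bag-level label; no per-student label is stored


bag = ClassBag(
    students=[Student(0.9, 82.0, 0.7), Student(0.6, 55.0, 0.3)],
    performed_well=True,
)
print(len(bag.students), bag.performed_well)
```

Note that the label lives on the bag only, which is the key difference from a traditional supervised dataset.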

First Case: Supervised Learning:

In traditional supervised learning, each instance (student) is labeled individually, and the learning algorithm directly operates on the labeled instances. The steps involved are as follows:

1. Instance-Level Labels:

Each instance (student) is labeled individually based on their performance. For example, a student may be labeled as "good" or "poor" based on their grades.

2. Feature Extraction:

Features are extracted from each instance (student) individually, such as average grade, attendance rate, participation level, etc.

3. Algorithm - Training a Classifier:

A learning algorithm, such as logistic regression or decision trees, is trained using the labeled instances (students) and their corresponding features. The goal is to learn a model that can accurately predict the label of a new, unseen instance based on its features.

4. Inference - Classifying New Instances:

Once the model is trained, it can be used to predict the label of new, unseen instances (students) by examining their features. For example, given the features of a student, the model can predict whether the student is "good" or "poor" based on the learned patterns from the training stage.
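The four steps above can be sketched with a tiny logistic regression written in plain Python. The feature values, the learning rate, and the function names (`train_logistic`, `predict`) are made up for illustration; in practice a library implementation would normally be used instead.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(X, y, lr=0.5, epochs=500):
    """Fit weights and bias with plain stochastic gradient descent."""
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        for xi, yi in zip(X, y):
            p = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b)
            err = p - yi  # gradient of the log loss w.r.t. the logit
            w = [wj - lr * err * xj for wj, xj in zip(w, xi)]
            b -= lr * err
    return w, b


def predict(w, b, x):
    """Label one new student from their features."""
    p = sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)
    return "good" if p >= 0.5 else "poor"


# Steps 1 and 2: every student has features and an individual label.
# Hypothetical features: [average grade, attendance rate, participation],
# all scaled to the range 0 to 1.
X = [[0.9, 0.95, 0.8], [0.85, 0.9, 0.6], [0.4, 0.5, 0.2], [0.3, 0.6, 0.1]]
y = [1, 1, 0, 0]  # 1 = "good", 0 = "poor"

# Step 3: train the classifier on labeled instances.
w, b = train_logistic(X, y)

# Step 4: classify new, unseen students.
print(predict(w, b, [0.88, 0.9, 0.7]))
print(predict(w, b, [0.35, 0.4, 0.2]))
```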

Second Case: Multiple Instance Learning:

1. Instance-Bag Representation:

In this scenario, instances represent students. Each student is described by a set of features, such as grades, attendance, and participation. Bags represent classes. Each bag consists of multiple instances (students).

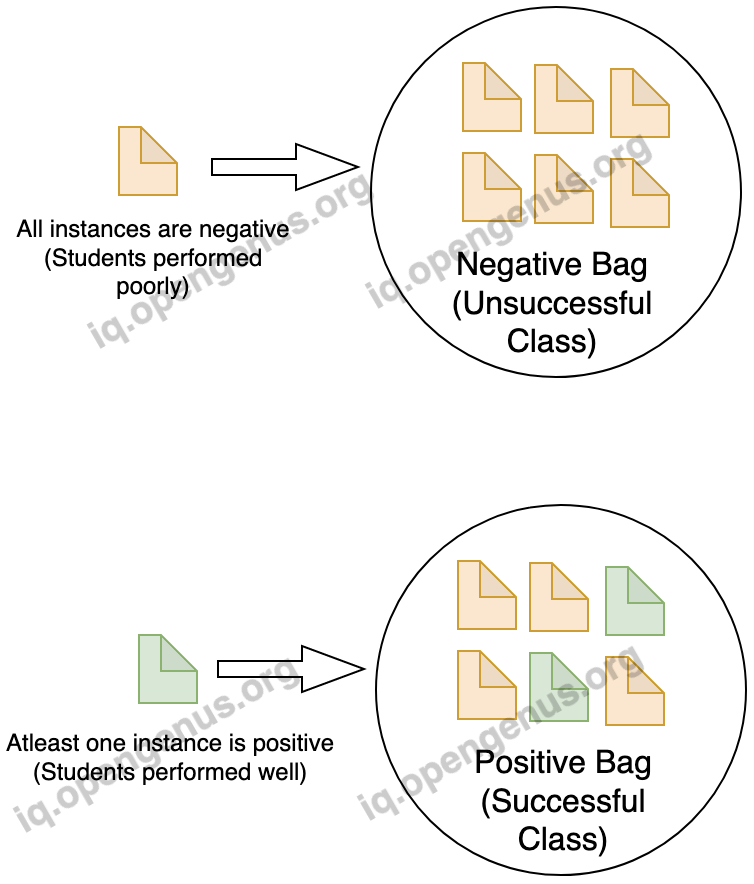

2. Bag-Level Labels:

Instead of labeling instances individually, each bag (class) is labeled as positive or negative based on the presence or absence of positive instances (students) within the bag. For example, a bag is labeled positive if at least one student in the class performs well, and negative if all students perform poorly.

3. Feature Extraction:

Features are extracted from the instances (students) within each bag (class), as before.

4. Algorithm - Bag-Level Classification:

A learning algorithm is applied to train a classifier using the extracted features and the bag-level labels. The goal is to learn a model that can accurately classify the bags (classes) as positive or negative based on the information from the instances within them.

5. Inference - Classifying New Bags:

The trained model can be used to classify new, unseen bags (classes) by examining the instances (students) within each bag. The model predicts whether the bag is positive or negative based on the learned patterns from the training stage.
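The steps above can be sketched in plain Python. This is one simple way to do bag-level classification, not the only one: it assumes a logistic instance scorer combined with max pooling, so a bag is scored by its highest-scoring instance, which matches the "at least one positive instance" labeling rule described in step 2. All function names, feature values, and hyperparameters are hypothetical.

```python
import math


def bag_label(instance_labels):
    """Labeling rule from step 2: positive if at least one instance is positive."""
    return int(any(instance_labels))


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def instance_score(w, b, x):
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def bag_score(w, b, bag):
    """Max pooling: a bag is as positive as its most positive instance."""
    return max(instance_score(w, b, x) for x in bag)


def train_mil(bags, labels, lr=0.5, epochs=1000):
    """Train the instance scorer using bag-level labels only."""
    w = [0.0] * len(bags[0][0])
    b = 0.0
    for _ in range(epochs):
        for bag, y in zip(bags, labels):
            # Under max pooling only the top-scoring instance gets a gradient.
            top = max(bag, key=lambda x: instance_score(w, b, x))
            err = instance_score(w, b, top) - y
            w = [wj - lr * err * xj for wj, xj in zip(w, top)]
            b -= lr * err
    return w, b


def predict_bag(w, b, bag):
    return int(bag_score(w, b, bag) >= 0.5)


# Each bag is a class; each instance is [grade, attendance, participation].
bags = [
    [[0.9, 0.95, 0.8], [0.4, 0.5, 0.2], [0.3, 0.6, 0.1]],   # positive class
    [[0.35, 0.5, 0.3], [0.85, 0.9, 0.7]],                   # positive class
    [[0.4, 0.6, 0.2], [0.3, 0.4, 0.1], [0.45, 0.5, 0.3]],   # negative class
    [[0.35, 0.55, 0.2], [0.5, 0.45, 0.25]],                 # negative class
]
labels = [1, 1, 0, 0]  # bag-level labels; no student is labeled individually

w, b = train_mil(bags, labels)

new_positive = [[0.3, 0.5, 0.2], [0.88, 0.92, 0.75]]
new_negative = [[0.4, 0.5, 0.2], [0.3, 0.45, 0.15]]
print(predict_bag(w, b, new_positive), predict_bag(w, b, new_negative))
```

Bags of different sizes are handled naturally here because the `max` reduces any number of instance scores to a single bag score.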

Comparison:

1. In traditional supervised learning, each instance (student) is labeled individually, and the learning algorithm operates directly on the labeled instances. However, in multiple instance learning, the learning algorithm operates on bags (classes), and the bag-level labels are used for training.

2. In traditional supervised learning, the focus is on predicting the label of individual instances. In contrast, multiple instance learning focuses on classifying bags based on the collective information from the instances within them.

Applications of Multiple Instance Learning

MIL finds applications in various domains where the training data can be naturally organized into bags. Some examples include:

1. Medical Imaging:

MIL has been used in medical imaging analysis, such as mammography and pathology. In breast cancer detection, for example, bags represent mammograms, and each bag contains multiple image patches (instances) from different regions of the mammogram. By learning from positive and negative bag labels, MIL algorithms can identify patterns associated with cancerous regions.

2. Object Recognition:

MIL has been employed in object recognition tasks, especially when dealing with images or videos containing multiple objects. Each bag represents an image or a video, and instances correspond to image patches or video frames. By considering bags as positive or negative based on the presence or absence of a particular object of interest, MIL algorithms can learn to recognize objects in complex scenes.

3. Drug Discovery:

In the field of pharmaceuticals, multiple instance learning has been used to identify potential drug candidates. Chemical compounds are represented as bags, while instances represent different conformations or descriptors of the compound. By labeling bags as active or inactive based on biological assays, MIL algorithms can discover compounds with desirable properties.

4. Text Classification:

MIL has been applied in text classification tasks, such as sentiment analysis and document categorization. Bags represent documents, while instances represent text snippets or segments. By labeling bags as positive or negative based on overall sentiment or category, MIL algorithms can learn to classify documents with ambiguous or incomplete labeling.

5. Video Surveillance:

MIL has found applications in video surveillance systems, where bags represent videos or video clips, and instances correspond to frames or segments within the videos. By labeling bags as containing or not containing certain activities or events of interest, MIL algorithms can learn to detect specific behaviors or anomalies in surveillance footage.
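In all of these applications the first practical step is turning a raw item into a bag of instances. As a rough illustration for the text classification case, the sketch below splits a document into sentence-level instances and attaches a single document-level label. The splitter is deliberately naive, and the function name `document_to_bag` and the dictionary layout are assumptions made for this example.

```python
import re


def document_to_bag(text):
    """Split a document into sentence-level instances (naive splitter)."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


doc = "The plot was slow. The acting was superb! Would I watch it again? Probably."
instances = document_to_bag(doc)

# The label belongs to the whole document, not to any single sentence.
labeled_bag = {"instances": instances, "label": "positive"}

for sentence in labeled_bag["instances"]:
    print(sentence)
```

The same pattern applies to the other domains: image patches from a mammogram, conformations of a compound, or frames from a video clip would take the place of sentences.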

MIL Advantages and Challenges:

Multiple Instance Learning offers several advantages, including the ability to leverage weakly labeled data, handle missing or noisy instance labels, and accommodate varying bag sizes. However, it also presents challenges, such as the potential loss of instance-level information and the need for specialized algorithms to handle bag-level classification.
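Both the "varying bag sizes" advantage and the "loss of instance-level information" challenge can be seen in a small sketch. It assumes one common strategy, pooling the instance feature vectors of a bag into a single fixed-length vector; the helper names `max_pool` and `mean_pool` and the numbers are hypothetical.

```python
def max_pool(bag):
    """Feature-wise maximum over all instances in the bag."""
    return [max(column) for column in zip(*bag)]


def mean_pool(bag):
    """Feature-wise average over all instances in the bag."""
    return [sum(column) / len(column) for column in zip(*bag)]


small_bag = [[1.0, 0.25], [0.0, 0.75]]                 # 2 instances
large_bag = [[0.5, 0.5], [0.25, 0.75], [0.75, 0.25]]   # 3 instances

# Bags of different sizes map to vectors of the same length.
print(max_pool(small_bag), max_pool(large_bag))

# Two quite different bags can collapse to the same pooled vector,
# which is the loss of instance-level information mentioned above.
print(mean_pool(small_bag), mean_pool(large_bag))
```

Any standard classifier can then be trained on the pooled vectors, at the cost of no longer knowing which instance drove the prediction.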

Conclusion

Multiple Instance Learning provides a powerful framework for supervised learning problems with incomplete or weakly labeled training data. By treating sets of instances as bags and learning from bag-level labels, it remains applicable when the labels of individual instances are unavailable or are difficult or expensive to obtain. It lets a model learn from the collective behavior of the instances within each bag, which helps in scenarios where the presence of positive instances determines the bag's positive label even though the specific positive instances are unknown.