

Graphics Processing Units (GPU), Tensor Processing Units (TPU) and Field Programmable Gate Arrays (FPGA) are processors with specialized purposes and architectures, and they are competing to become the best hardware for Machine Learning applications. We compare them with respect to Memory Subsystem Architecture, Compute Primitive, Performance, Purpose, Usage and Manufacturers.

Usage

- Graphics Processing Unit (GPU): Graphics rendering, Machine Learning model training and inference, programming problems with scope for parallelization, and general-purpose programming problems

- Tensor Processing Unit (TPU): Machine Learning model training and inference (originally only through TensorFlow; JAX and PyTorch, via PyTorch/XLA, can now also target TPUs)

- Field Programmable Gate Array (FPGA): General-purpose programming problems; the hardware can also be configured specifically for a particular problem (such as Machine Learning)

Performance

In compute-intensive tasks like Machine Learning, performance is often measured by latency, that is, the time taken to complete one unit of work (for example, one inference).
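As a rough illustration of what "time taken to complete one unit of work" means in practice, here is a minimal Python sketch that measures latency as the median wall-clock time of repeated calls. The workload `toy_inference` is a hypothetical stand-in (a small dot product), not a real model, and the warm-up and run counts are arbitrary choices.

```python
import statistics
import time
from typing import Callable


def measure_latency_ms(task: Callable[[], object], warmup: int = 3, runs: int = 20) -> float:
    """Return the median wall-clock time, in milliseconds, for one call of `task`."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    # Warm-up calls are discarded so one-time costs (caches, lazy setup) do not skew results.
    for _ in range(warmup):
        task()
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        task()
        samples.append((time.perf_counter() - start) * 1000.0)
    # The median is less sensitive than the mean to occasional scheduling hiccups.
    return statistics.median(samples)


def toy_inference() -> float:
    """Hypothetical stand-in for one unit of work: a dot product of two vectors."""
    a = [0.5] * 10_000
    b = [2.0] * 10_000
    return sum(x * y for x, y in zip(a, b))


if __name__ == "__main__":
    print(f"median latency: {measure_latency_ms(toy_inference):.3f} ms")
```

When timing work on a GPU or TPU, kernel launches are usually asynchronous, so the timer should only be stopped after the device has actually finished the computation; otherwise the measured latency will be misleadingly small.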

The following are the best latencies on each platform for a standard model such as GoogLeNet:

- FPGA: 1.5 milliseconds

- GPU: 1.7 milliseconds

- TPU: 0.9 milliseconds

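On this benchmark, the TPU has the lowest latency: about 40% lower than the FPGA and about 47% lower than the GPU. This suggests that, for Machine Learning workloads like this one, a TPU can perform considerably better than the other platforms.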
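The GoogLeNet latencies listed above can be turned into relative comparisons with a little arithmetic. The sketch below assumes that units of work run strictly one after another (no batching or overlap), so throughput is simply 1000 divided by the latency in milliseconds; real deployments that batch requests can reach much higher throughput than this.

```python
# Best-case GoogLeNet latencies quoted in this article, in milliseconds.
LATENCY_MS = {"FPGA": 1.5, "GPU": 1.7, "TPU": 0.9}


def throughput_per_second(latency_ms: float) -> float:
    """Inferences per second, assuming one unit of work at a time (no batching)."""
    return 1000.0 / latency_ms


def reduction_vs(baseline_ms: float, candidate_ms: float) -> float:
    """Fractional latency reduction of `candidate_ms` relative to `baseline_ms`."""
    return 1.0 - candidate_ms / baseline_ms


if __name__ == "__main__":
    # List platforms from fastest to slowest.
    for name, ms in sorted(LATENCY_MS.items(), key=lambda kv: kv[1]):
        print(f"{name}: {ms} ms -> about {throughput_per_second(ms):.0f} inferences/s")
    tpu = LATENCY_MS["TPU"]
    for other in ("FPGA", "GPU"):
        print(f"TPU latency is {reduction_vs(LATENCY_MS[other], tpu):.0%} lower than {other}")
```

Under this sequential assumption, the TPU completes roughly 1111 inferences per second, compared with about 667 for the FPGA and about 588 for the GPU.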

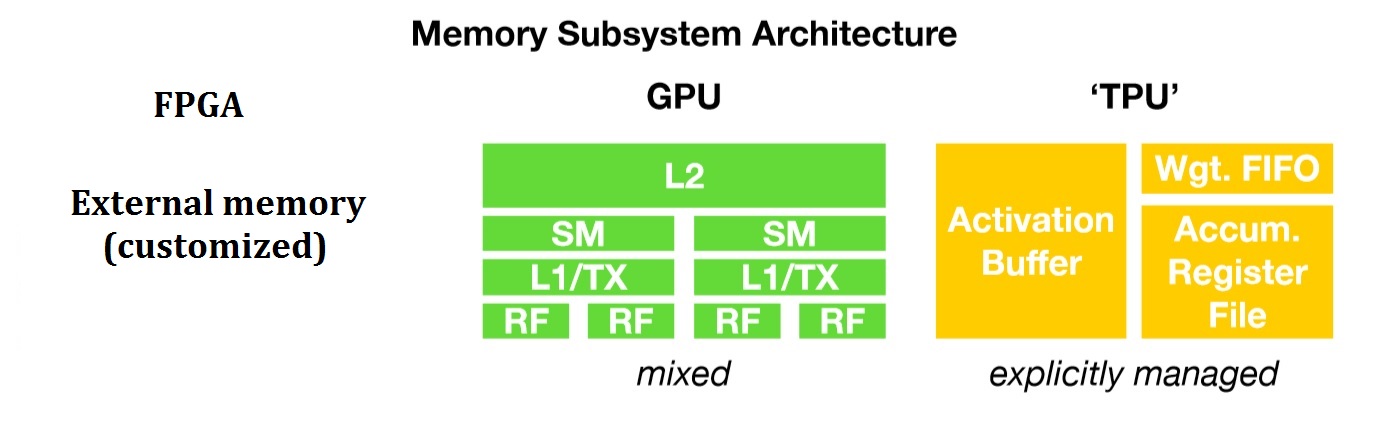

Memory architecture

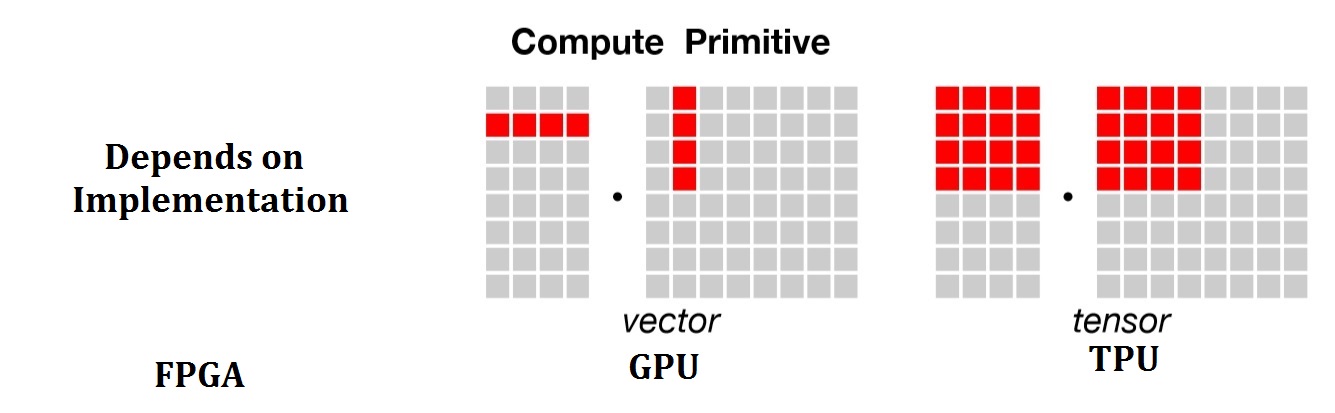

Compute primitive

Backing/Support

All three hardware alternatives are backed by industry giants.

- GPU : Supported by NVIDIA and widely used

- TPU : Supported by Google

- FPGA : Supported by Microsoft, Amazon and Baidu

Manufacturers

- GPU : NVIDIA, AMD, Broadcom Limited, Imagination Technologies (PowerVR)

- TPU : Google

- FPGA : Intel, Xilinx

Conclusion

All three hardware platforms can be used to perform the same set of computational tasks, with different levels of implementation complexity and performance. That said, because an FPGA can be configured for a specific task, it can in principle match or outperform its counterparts on many tasks. For some Machine Learning tasks, however, this may not be feasible, and a TPU will beat it thanks to its unique, specialized design.

In general, if one needs good average performance across a variety of workloads, an FPGA is a good choice.