This article covers interview questions on software quality, including quality assurance (QA), software testing, and more.

1. Define Software Quality.

Software quality is measured by how well a product serves its intended purpose and meets user expectations. Software that satisfies its specifications and/or expectations, is delivered on time and within the allotted budget, and is maintainable is considered to be of high quality. In software engineering, software quality covers both structural and functional quality. A product's fitness for use is typically defined in terms of compliance with the requirements outlined in the Software Requirements Specification (SRS) document.

2. What is Quality Assurance?

The goal of quality assurance (QA) is to make sure that the software produced complies with all the requirements and specifications listed in the SRS document and will function as expected. More generally, quality assurance refers to any systematic procedure used to determine whether a product or service satisfies specified requirements. In the context of software testing, it is a method for assuring that customers receive software products or services of the desired quality. QA focuses on streamlining and improving the software development process against the quality standards set for the product. It is a proactive technique: it identifies ways to prevent errors during the software development life cycle (SDLC). Participants in QA activities include stakeholders, business analysts, developers, and testers.

3. Explain McCall Quality Factors Model.

Over the years, various models of software quality factors and their classification into factor categories have been proposed. McCall proposed an 11-factor model for the traditional software quality factors. All software requirements are categorised into eleven software quality factors using McCall's factor model. The factors are broadly grouped into three categories:

Product Transition: This includes three software quality factors that enable the software to adapt to a new platform or technology.

- Portability: The amount of work necessary to move software from one platform to another.

- Reusability: The degree to which the program's source code can be applied to other projects.

- Interoperability: The effort necessary to interlink two systems with each other.

Product Revision: It comprises three software quality factors that are necessary for software testing and maintenance. They cover ease of maintenance, adaptability, and testing effort, and they support the software's ability to keep working in accordance with user expectations and requirements in the future.

- Testability: The amount of work necessary to confirm that a piece of software satisfies its requirements.

- Flexibility: The effort required to enhance a working software system.

- Maintainability: The amount of work needed to find and fix errors during the maintenance phase.

Product Operation: It consists of five software quality factors tied to the requirements that directly affect how the software functions day to day. These factors contribute to a better user experience.

- Reliability: The degree to which a piece of software carries out its intended tasks without error.

- Correctness: How closely a piece of software adheres to its requirements.

- Integrity: The degree to which the software can prevent someone who shouldn't be able to access the data or software from doing so.

- Efficiency: The amount of computing resources and code required to carry out a task.

- Usability: How much effort is needed to use, operate, and comprehend a piece of software.

4. What are the components of SQA?

- Pre-project components: These ensure that the project commitments have been properly defined in terms of time estimates, clarity of client needs, overall project budget, development risk assessment, and the total workforce needed for the project. They also ensure that the development and quality plans have been precisely outlined.

- Components of project life cycle activities assessment: This class consists of reviews, expert opinions, software testing, and software maintenance components. The project development life cycle includes components such as reviews, expert opinions, and the detection of errors in software design and programming, whereas the software maintenance life cycle combines development life cycle components with specialised maintenance components to improve maintenance tasks.

- Components of infrastructure error prevention and improvement: Based on the organization's cumulative SQA experience, this class covers personnel training, certification, configuration management, and preventive and corrective procedures, with the aim of lowering the rate of software faults.

- Components of software quality management: This class includes software quality metrics and software quality costs. It introduces managerial involvement in, and control of, development and maintenance activities in order to lower the quality, schedule, and budget risks in the project.

- Components of standardization, certification, and SQA system assessment: The primary goal of this class is to make use of international professional knowledge, which helps coordinate the quality systems of different organisations.

- Organizing for SQA (the human components): The main goal is to initiate and support SQA efforts, identify gaps and deviations, and propose changes. Managers, testers, and other SQA professionals and interested practitioners are involved.

5. What is ISO 9000?

The International Organization for Standardization (ISO) created and released the ISO 9000 family of standards. It helps organisations in the manufacturing and service industries define, establish, and maintain an effective quality assurance (QA) system. The ISO 9000 family is made up of several separate standards, such as:

ISO 9000:2015 explains the fundamental concepts and terminology of quality management systems (QMS).

ISO 9001:2015 specifies the requirements a QMS must meet.

ISO 9004:2018 provides guidance on achieving sustained, long-term success in quality management.

ISO 19011:2018 provides guidelines for auditing management systems.

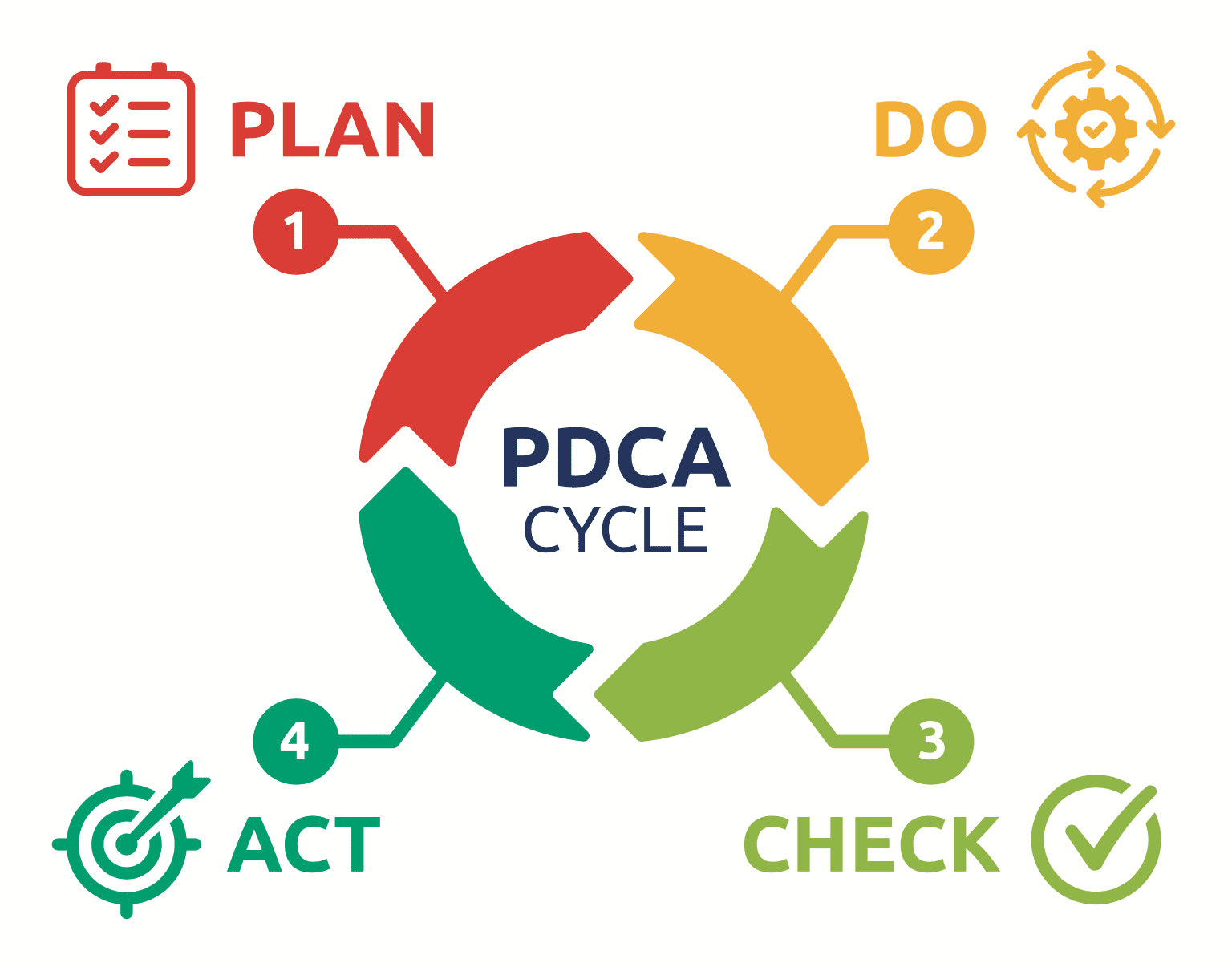

6. What is the lifecycle of a Quality Assurance Process?

The Plan-Do-Check-Act (PDCA) cycle, also referred to as the Deming cycle, is followed at each step in the quality assurance process. The stages of this cycle are as follows:

- Plan: In the Plan phase, the organisation decides on the procedures, objectives, and methods needed to create a high-quality software product.

- Do: Do is the stage in which the processes are developed and tested. Here, it is ensured that the development procedures followed uphold the product's quality.

- Check: During this stage, processes are examined to determine whether they satisfy user needs and the desired goals.

- Act: Act is the stage in which the measures necessary to improve the processes are put into practice.

7. What is Software Testing? What are the phases involved in Software Testing?

Software testing entails assessing and confirming the functionality of a software product. In essence, it checks the product for defects and verifies that it satisfies the specified requirements. Testing cannot prove that no defects remain, but it improves product quality by catching bugs early, cutting down on development expenses, and reducing performance problems.

The term "Software Testing Life Cycle" refers to a testing procedure with particular phases that must be carried out in a specific order to guarantee that the quality objectives have been reached. The following are the phases of Software Testing Life Cycle:

- Requirement Analysis: The requirements are examined and studied during this STLC phase. Brainstorming sessions are held with other teams to determine whether the requirements are testable. The scope of the testing is determined in this phase.

- Test Planning: Test planning is the stage in which all test strategies are laid out, which makes it one of the most valuable phases of the life cycle. In this phase, the test manager estimates the effort and cost of the testing work and identifies the resources and activities that will be most helpful in achieving the testing goals.

- Test Case Development: During this stage, the testing team creates detailed test cases and prepares the necessary test data. The quality assurance team reviews the test cases after they have been prepared.

- Test Environment Setup: The test environment is the software and hardware setup that the testing team uses to carry out test cases, and it determines the conditions under which the software is tested. This phase can run in parallel with test case development.

- Test Execution: In this phase, the testing team runs the test cases prepared earlier. It involves running the code and comparing the expected results with the actual results.

- Test Closure: This is the last stage of the STLC, in which the testing process itself is reviewed. The testing team meets and assesses the cycle completion criteria in light of test coverage, quality, cost, and time constraints, as well as the software and key business objectives.

8. What are the different levels of testing?

The following are the four levels of testing in software testing:

- Unit Testing: A unit is the smallest software component that can be tested. This level of testing examines each component or unit of the software separately to check that it behaves as specified and to detect defects in it in isolation.

- Integration Testing: Two or more tested units are combined to see whether the combined modules function as expected.

- System Testing: The complete, integrated software is tested as a whole, so that all of the components that make up the system are exercised together.

- Acceptance Testing: It is carried out prior to delivery to make sure that the users' requirements are met and that the software functions properly in the user's working environment.
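The difference between the first two levels can be sketched in a few lines of Python. The functions `apply_discount` and `cart_total` are hypothetical and exist only for this illustration: the first check exercises one unit in isolation, the second exercises two units working together.

```python
def apply_discount(price: float, percent: float) -> float:
    """Hypothetical unit: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


def cart_total(prices: list[float], percent: float) -> float:
    """Hypothetical second unit that depends on apply_discount."""
    return round(sum(apply_discount(p, percent) for p in prices), 2)


def test_unit_apply_discount():
    # Unit level: one function, tested on its own.
    assert apply_discount(200.0, 25) == 150.0


def test_integration_cart_total():
    # Integration level: cart_total and apply_discount combined.
    assert cart_total([100.0, 50.0], 10) == 135.0


test_unit_apply_discount()
test_integration_cart_total()
```

System and acceptance testing work on the deployed application and the user's environment, so they are usually not expressible as a small in-process snippet like this one.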

9. What are the different methods of software testing?

- Black-box testing: A testing approach that relies only on specifications and requirements. It does not call for any understanding of the internal structure, code paths, or implementation of the software under test.

- White-box testing: A testing method based on the internal code, implementation, and code paths of the software under test. White-box testing typically calls for in-depth programming skills.

- Grey-box testing: A combination of the two, in which the tester has limited knowledge of the program's internal workings.
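As a rough illustration of the first two methods, consider a hypothetical `shipping_fee` function whose one-line specification is "orders of 50.00 or more ship free, all others cost 4.99". The black-box cases below come from that specification alone, while the white-box case is chosen by reading the code.

```python
def shipping_fee(order_total: float) -> float:
    """Hypothetical function under test (not from any real library)."""
    if order_total < 0:
        raise ValueError("order_total cannot be negative")
    if order_total >= 50:
        return 0.0
    return 4.99


# Black-box: inputs and expected outputs derived from the specification,
# concentrating on the boundary at 50.00.
black_box_cases = [(0.0, 4.99), (49.99, 4.99), (50.00, 0.0)]
for total, expected in black_box_cases:
    assert shipping_fee(total) == expected

# White-box: reading the code reveals a third branch (negative input)
# that the specification never mentions, so a case is added to cover it.
try:
    shipping_fee(-1)
    negative_rejected = False
except ValueError:
    negative_rejected = True
assert negative_rejected
```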

10. What are some different types of software testing?

Here are a few types of software testing:

- Regression Testing: Makes sure that existing features are not broken by recent code modifications.

- System Testing: Comprehensive end-to-end testing performed on the entire software to ensure that the whole system functions as planned.

- Smoke Testing: A brief test to verify that the software starts up successfully and functions at the most fundamental level. The term stems from hardware testing, which involves simply plugging in the device and checking whether smoke comes out.

- Performance Testing: Verifies that the software performs as users expect by examining throughput and response time under a particular load and set of conditions.

- Usability Testing: Evaluates how easy the software is to use. It is done with a sample group of end users who use the product and comment on how simple or difficult it is to use.

- Security Testing: Attempts to breach the software's security safeguards in order to uncover vulnerabilities that could expose private information. Security testing is more crucial than ever, and it is essential for web-based applications and applications that handle money.

11. What are functional and non-functional testing?

Functional testing is a type of software testing that checks the system against its functional specifications and requirements. It ensures that the programme satisfies all requirements laid out in the requirements specification. The emphasis is on the result of each operation: this type of testing makes no assumptions about internal structure and instead concentrates on replicating actual system usage. Examples: unit testing, integration testing, system testing, acceptance testing, regression testing, etc.

Non-functional testing is a type of software testing that confirms the non-functional requirements of the programme. It establishes whether the system is acting in a manner that complies with the standards. It looks at every facet that functional testing doesn't touch. Example: Performance testing, Load Testing, Stress testing, Volume testing, Security testing, Installation testing, Recovery testing, etc.

12. What is the difference between Verification and Validation in Software Testing?

Validation: Validation is a procedure that involves running the software product, i.e. dynamic testing. It checks whether we are building the right product, one that satisfies the user's requirements. Unit testing, integration testing, system testing, and user acceptance testing are some of the activities involved.

Verification: Verification is a procedure based on reviewing documents and other work products without running the code. It checks whether the software is being built in compliance with its specified requirements. Its ultimate objective is to ensure the quality of the software product, architecture, design, etc.

| Verification | Validation |
|---|---|
| Verification is the technique of assessing development-phase procedures to see if they adhere to user requirements. | After the development phase, the product is evaluated to see if it satisfies the requirements. This is known as validation. |
| Static testing | Dynamic testing |
| Execution of the code is not involved. | Execution of the code is involved. |
| It entails tasks including desk checks, walkthroughs, inspections, and reviews. | It uses techniques including non-functional testing, white box testing, and black box testing. |
| It is carried out prior to the validation procedure. | It is carried out after the verification procedure. |

13. What is the difference between the QA and software testing?

The role of QA (Quality Assurance) is to monitor the quality of the “process” used to produce the software, whereas software testing is the process of ensuring that the functionality of the final product meets the user’s requirements.

| Quality Assurance | Testing |
|---|---|
| A series of procedures used to ensure that the software produced satisfies all user requirements. | A task carried out after the development stage to find errors in the software by examining whether the actual results match the expected ones. In a nutshell, software testing is the verification of the application under test. |
| It entails actions such as the application of standards, procedures, and processes. | It entails checking and testing, among other things. |
| It is process-oriented, meaning it checks the processes to make sure the client receives high-quality software. | It is product-oriented, checking how the software functions. |
| Delivering high-quality software is the primary goal of quality assurance. | Finding faults in the software produced is the major goal of software testing. |
| It is preventive (attempts to prevent defects from existing in the product). | It is corrective (attempts to find defects that exist in the product so that they can be fixed). |

14. What are the different types of testing documents?

- Requirement Document: It lists, as requirements, all the functionality that needs to be added to the application. Developers, business analysts, testers, and other members of the project team work together to create this document.

- Test Metrics: Quantitative measures used to assess the performance and quality of the testing effort.

- Test plan: It outlines the approach that will be taken to test a program, the tools that will be employed, the testing environment that will be used, and how test activities will be scheduled.

- Test cases: A test case is a set of steps and conditions used during testing to check whether a piece of the software's functionality operates as intended. There are many different sorts of test cases, including logical, physical, functional, negative, error, user interface, and more.

- Traceability matrix: A traceability matrix is a table that links test cases with user requirements. The primary goal of a Requirement Traceability Matrix is to make sure that every requirement is covered by at least one test case, so that no functionality is overlooked during testing.

- Test scenario: A test scenario is a group of test cases that helps the testing team identify the strengths and weaknesses of a product.
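The idea behind a traceability matrix can be sketched in a few lines of Python. The requirement and test case identifiers below are invented for the example; in practice the mapping would come from a test management tool or a spreadsheet.

```python
def build_matrix(requirements, test_cases):
    """Map each requirement ID to the test cases that cover it.

    `test_cases` maps a test case ID to the requirement IDs it exercises.
    """
    matrix = {req: [] for req in requirements}
    for tc_id, covered in test_cases.items():
        for req in covered:
            # setdefault also records requirement IDs that were not listed up front.
            matrix.setdefault(req, []).append(tc_id)
    return matrix


def uncovered(matrix):
    """Return the requirements that no test case covers."""
    return [req for req, tcs in matrix.items() if not tcs]


# Hypothetical data for illustration only.
requirements = ["REQ-1", "REQ-2", "REQ-3"]
test_cases = {"TC-1": ["REQ-1"], "TC-2": ["REQ-1", "REQ-2"]}

matrix = build_matrix(requirements, test_cases)
print(matrix)             # which test cases cover each requirement
print(uncovered(matrix))  # requirements that still need a test case
```

Here `REQ-3` has no linked test case, which is exactly the kind of gap the matrix is meant to expose.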

15. What is a bug?

A software bug is a programming mistake that leads to inaccurate outcomes. A software tester examines the application to look for faults.

The flaws might be caused by a variety of factors, such as subpar design, shoddy programming, an absence of version control, or improper communication. Several problems are introduced into the system by developers as they work on it. Finding those bugs is the tester's objective.

Regardless of how they are discovered, all bugs are entered into a bug tracking system. A triage team prioritises each bug, and a software developer is then given the task of fixing it. Once the issue has been fixed, the developer checks the code in and marks the bug as ready for testing. The tester then runs tests on the software to see whether the bug has been resolved. If so, the bug is closed. If not, the tester returns it to the original developer and specifies precisely how to reproduce it. Popular bug-tracking tools include FogBugz, Bugzilla, etc.

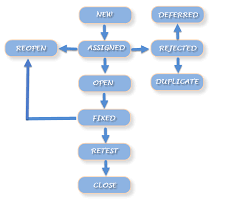

16. What is a bug life cycle?

A bug goes through a certain set of phases during its life cycle. This is called the bug life cycle or the defect life cycle.

- New: The status of a new defect is set to New when it is initially logged and posted.

- Assigned: After the tester posts a bug, the lead tester reviews it and assigns it to the development team.

- Open: The developer begins analysing the defect and works on its fix.

- Fixed: A developer may mark an issue as fixed once the required code modifications have been made and verified.

- Retest: The status is set to Retest while the tester retests the code to determine whether the developer has actually fixed the issue.

- Reopen: If the bug still exists after the developer's fix, the tester switches the status to Reopen, and the bug runs through the life cycle once more.

- Verified: If the tester retests the fix and the bug no longer occurs, the status is changed to Verified.

- Closed: The status is changed to Closed if the bug is no longer present.

- Duplicate: The status is changed to Duplicate if the defect has been reported twice or describes the same underlying problem as an earlier bug.

- Rejected: The status is changed to Rejected if the developer believes the flaw is not actually there.

- Deferred: If the bug is not a high priority and can be fixed in a later release, the status becomes Deferred.
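The life cycle above can be modelled as a small state machine. The sketch below is one possible reading of the list: the statuses come from the text, but the exact set of allowed transitions is an assumption made for illustration, and real bug trackers configure their workflows differently.

```python
from enum import Enum


class Status(Enum):
    NEW = "New"
    ASSIGNED = "Assigned"
    OPEN = "Open"
    FIXED = "Fixed"
    RETEST = "Retest"
    REOPEN = "Reopen"
    VERIFIED = "Verified"
    CLOSED = "Closed"
    DUPLICATE = "Duplicate"
    REJECTED = "Rejected"
    DEFERRED = "Deferred"


# Assumed transitions; statuses missing from this table are treated as terminal.
ALLOWED = {
    Status.NEW: {Status.ASSIGNED, Status.DUPLICATE, Status.REJECTED, Status.DEFERRED},
    Status.ASSIGNED: {Status.OPEN},
    Status.OPEN: {Status.FIXED, Status.DUPLICATE, Status.REJECTED, Status.DEFERRED},
    Status.FIXED: {Status.RETEST},
    Status.RETEST: {Status.VERIFIED, Status.REOPEN},
    Status.REOPEN: {Status.ASSIGNED},
    Status.VERIFIED: {Status.CLOSED},
}


def move(current: Status, target: Status) -> Status:
    """Return the new status, or raise if the transition is not allowed."""
    if target not in ALLOWED.get(current, set()):
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target


# Walk the path of a bug that is fixed on the first attempt.
status = Status.NEW
for step in (Status.ASSIGNED, Status.OPEN, Status.FIXED,
             Status.RETEST, Status.VERIFIED, Status.CLOSED):
    status = move(status, step)
print(status.value)
```

Encoding the transitions as data makes illegal jumps, such as closing a bug that was never retested, fail loudly instead of silently corrupting the record.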

17. What do you understand by bug leakage and bug release?

-

Bug Leakage: A bug leakage happens when an end user finds a bug that should have been caught in earlier builds or versions of the application. A bug that exists during testing but is not found by the tester and is later found by the end-user is referred to as a bug leak.

-

Bug Release: A bug release is when a certain version of the product is made available with one or more known defects. These bugs usually have low priority and/or severity. This is done when the company can afford to have the bug in the released software rather than spending the time and money to fix it in that version. Such bugs are typically mentioned in the release notes.

18. What are the five common solutions for software development problems?

Five common solutions to software development problems are:

- Establish clear, comprehensive, and widely agreed requirements before beginning software development.

- Create a realistic schedule that allows time for planning, designing, testing, bug fixes, and retesting.

- Test adequately, and start testing as soon as one or more modules are developed.

- Use tools for group communication.

- During the design phase, use rapid prototyping to make it simple for the customer to understand what to expect.

19. Analysis of performance metrics.

Performance metrics are measurements used to evaluate the efficiency and effectiveness of a software system. These metrics are used to assess how well the software system is able to perform its intended functions, such as responding to user requests, processing data, and managing resources. Some common performance metrics include:

- Response time: a measure of the time it takes for a software system to respond to a user request or input.

- Throughput: a measure of the number of requests or inputs that a software system can handle in a given period of time.

- Resource utilization: a measure of how efficiently a software system is using resources such as CPU, memory, and disk space.

- Memory usage: a measure of how much memory a software system is using at any given time.

- CPU usage: a measure of how much CPU time a software system is using at any given time.

- Disk I/O: a measure of the rate of data transfer to and from disk storage.

- Network usage: a measure of the rate of data transfer over a network connection.

- Latency: a measure of the delay between a user request and the software system's response.

- Error rate: a measure of the number of errors that occur during the execution of the software system.

- Resource consumption: a measure of the consumption of resources by a software system, such as memory, disk space, CPU time, and network bandwidth.

These metrics can be used to evaluate the performance of a software system during different stages of its lifecycle, such as development, testing, and production. Performance metrics can be used to identify bottlenecks and to optimize the performance of a software system. They can also be used to track the progress of a software system over time and to identify potential issues that may arise in the future.
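As a minimal sketch of how three of these metrics might be derived from raw measurements, the Python snippet below summarises a handful of request records. The record layout (start time, end time, success flag) and the sample values are assumptions made for the example, not output from a real system.

```python
from statistics import mean


def summarize(samples):
    """Summarise (start_ms, end_ms, succeeded) request records."""
    durations = [end - start for start, end, _ok in samples]
    # Observation window: first request start to last request end.
    window_ms = max(end for _s, end, _ok in samples) - min(s for s, _e, _ok in samples)
    failures = sum(1 for _s, _e, ok in samples if not ok)
    return {
        "avg_response_time_ms": mean(durations),
        "throughput_rps": len(samples) / (window_ms / 1000),
        "error_rate": failures / len(samples),
    }


# Hypothetical measurements, in milliseconds since the start of the test run.
samples = [
    (0, 200, True),
    (500, 900, True),
    (1000, 1100, False),
    (1500, 1800, True),
]

report = summarize(samples)
for name, value in report.items():
    print(f"{name}: {value:.2f}")
```

Averages hide outliers, so real performance reports usually add percentiles (for example the 95th percentile response time) alongside the mean.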

20. Explain Capability Maturity Model Integration (CMMI).

Capability Maturity Model Integration (CMMI) is a process improvement framework that provides organizations with a model for improving their processes and performance. It is a collection of best practices that can be used to improve an organization's ability to manage and deliver products and services.

CMMI provides a structure for evaluating and improving an organization's processes in a number of areas, including project management, engineering, and service management. The model is composed of five maturity levels that organizations can achieve, from "Initial" to "Optimizing." The maturity levels reflect the degree to which an organization's processes are defined, managed, measured, and controlled.

The CMMI model is designed to be flexible and can be tailored to meet the specific needs of an organization. It can be used in a variety of industries, including software development, information technology, and manufacturing. Organizations can use CMMI to improve the quality of their products and services, to reduce costs, and to increase customer satisfaction.

The CMMI model is widely used by organizations around the world. It was originally developed at Carnegie Mellon University's Software Engineering Institute and is now administered by the CMMI Institute, which is part of ISACA. Organizations can be appraised against the CMMI model by authorized appraisal teams and can obtain an official rating of their level of maturity.

The CMMI framework has traditionally offered two representations: continuous and staged. The continuous representation lets an organization improve the process areas that matter most to its specific needs, each rated with its own capability level, while the staged representation is a step-by-step approach in which the organization moves through the maturity levels in order. Either way, the CMMI model can be used to improve the processes and performance of an organization, and to achieve better results through a more structured and disciplined approach to process improvement.