Reading time: 30 minutes

A graphics processing unit (GPU) is a processor, like a CPU or a TPU, that is specialized for fast graphics processing. Specifically, it is designed to rapidly manipulate and alter memory to accelerate the creation of images in a frame buffer for display on a screen.

The parallel structure of a GPU makes it more efficient for algorithms whose components can be executed in parallel, such as machine learning algorithms and inference.

In this article, we explore some of the basic architecture concepts of the Graphics Processing Unit (GPU).

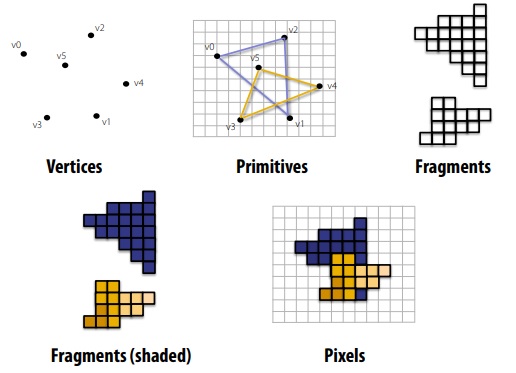

Graphics Pipeline

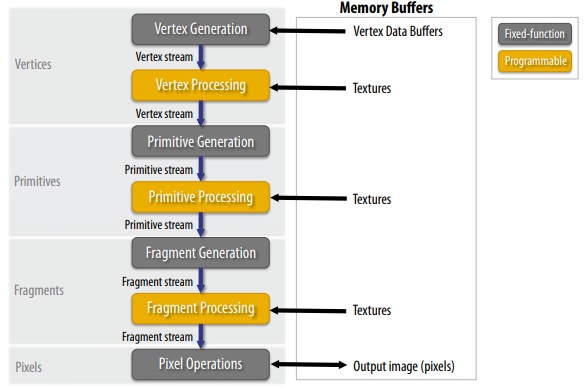

This image summarizes the graphics pipeline in a GPU.

You need to understand the following ideas:

- Vector processing

- Primitive Processing

- Rasterization

- Fragment processing

- Pixel operations

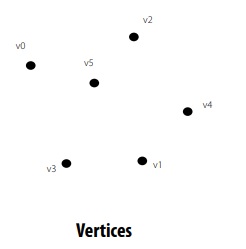

Vector processing

Each vector is transformed in screen space and processed independently.
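To make the per-vertex step concrete, here is a minimal Python sketch. It assumes each incoming vertex is already in clip space as `(x, y, z, w)` and that the screen is `width` by `height` pixels; the function names `to_screen` and `process_vertices` are hypothetical and chosen only for illustration. A real GPU runs a vertex shader here, but the key property is the same: no vertex needs data from any other vertex.

```python
def to_screen(vertex, width, height):
    """Map one clip-space vertex (x, y, z, w) to pixel coordinates plus depth."""
    x, y, z, w = vertex
    # Perspective divide gives normalized device coordinates in [-1, 1].
    ndc_x, ndc_y, ndc_z = x / w, y / w, z / w
    # Viewport transform: NDC to pixels (y grows downward on screen).
    sx = (ndc_x + 1.0) * 0.5 * width
    sy = (1.0 - ndc_y) * 0.5 * height
    return (sx, sy, ndc_z)


def process_vertices(vertices, width, height):
    """Transform every vertex; each call is independent of the others."""
    return [to_screen(v, width, height) for v in vertices]


if __name__ == "__main__":
    clip_space = [(-1.0, -1.0, 0.0, 1.0), (1.0, -1.0, 0.0, 1.0), (0.0, 2.0, 1.0, 2.0)]
    for screen_vertex in process_vertices(clip_space, 640, 480):
        print(screen_vertex)
```

Because `to_screen` reads only its own arguments, the list comprehension could be replaced by any parallel map without changing the result.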

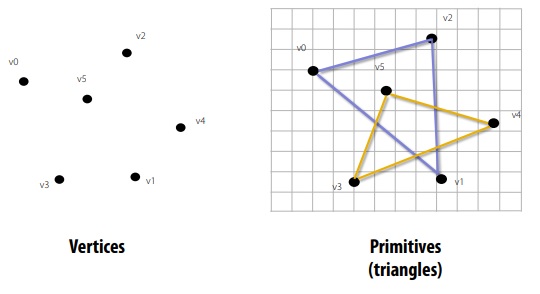

Primitive Processing

The vertices are organized into primitives (such as triangles), which are then clipped to the visible region.
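The two halves of this stage, assembly and clipping, can be sketched in a few lines of Python. This is a simplified 2D illustration, not how hardware does it: real GPUs clip in homogeneous clip space against the view volume. Here we assume an index list with three indices per triangle and use Sutherland-Hodgman clipping against a screen rectangle. The names `assemble_triangles` and `clip_polygon` are hypothetical.

```python
def assemble_triangles(vertices, indices):
    """Group an index list into triangles, three indices per primitive."""
    return [tuple(vertices[i] for i in indices[k:k + 3])
            for k in range(0, len(indices) - 2, 3)]


def clip_polygon(polygon, xmin, ymin, xmax, ymax):
    """Sutherland-Hodgman clipping of a convex polygon against a rectangle."""

    def clip_edge(points, inside, intersect):
        out = []
        for i, cur in enumerate(points):
            prev = points[i - 1]  # wraps around to the last point when i == 0
            if inside(cur):
                if not inside(prev):
                    out.append(intersect(prev, cur))  # entering the region
                out.append(cur)
            elif inside(prev):
                out.append(intersect(prev, cur))      # leaving the region
        return out

    def cross_x(x):
        # Intersection of segment p-q with the vertical line at x.
        return lambda p, q: (x, p[1] + (x - p[0]) / (q[0] - p[0]) * (q[1] - p[1]))

    def cross_y(y):
        # Intersection of segment p-q with the horizontal line at y.
        return lambda p, q: (p[0] + (y - p[1]) / (q[1] - p[1]) * (q[0] - p[0]), y)

    boundaries = [
        (lambda p: p[0] >= xmin, cross_x(xmin)),
        (lambda p: p[0] <= xmax, cross_x(xmax)),
        (lambda p: p[1] >= ymin, cross_y(ymin)),
        (lambda p: p[1] <= ymax, cross_y(ymax)),
    ]
    points = list(polygon)
    for inside, intersect in boundaries:
        if not points:
            break  # polygon is entirely outside; nothing left to clip
        points = clip_edge(points, inside, intersect)
    return points


if __name__ == "__main__":
    verts = [(-2, 0), (2, 0), (2, 4)]
    for tri in assemble_triangles(verts, [0, 1, 2]):
        print(clip_polygon(tri, 0, 0, 4, 4))
```

Note that clipping a triangle can produce a polygon with more than three vertices, which would then be split back into triangles before rasterization.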

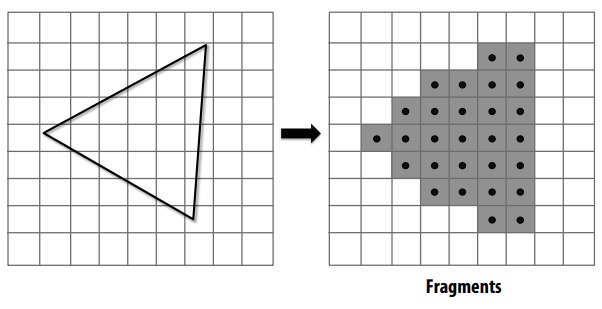

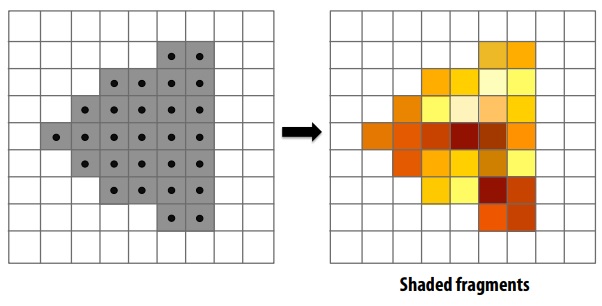

Rasterization

Primitives are rasterized into “pixel fragments”. Each primitive is rasterized independently.
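One common way to rasterize a triangle is to test each pixel center against the triangle's three edges. The Python sketch below uses that edge-function approach under some simplifying assumptions: vertices are 2D screen coordinates, a pixel `(x, y)` has its center at `(x + 0.5, y + 0.5)`, and pixels lying exactly on an edge are counted as covered (real hardware applies a fill rule so that shared edges are not drawn twice). The function names are hypothetical.

```python
import math


def edge(a, b, p):
    """Edge function: twice the signed area of triangle (a, b, p)."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def rasterize(tri, width, height):
    """Return the (x, y) pixels whose centers lie inside the triangle."""
    a, b, c = tri
    area = edge(a, b, c)
    if area == 0:
        return []  # degenerate triangle covers no pixels
    # Scan only the triangle's bounding box, clamped to the screen.
    x0 = max(math.floor(min(a[0], b[0], c[0])), 0)
    x1 = min(math.ceil(max(a[0], b[0], c[0])), width)
    y0 = max(math.floor(min(a[1], b[1], c[1])), 0)
    y1 = min(math.ceil(max(a[1], b[1], c[1])), height)
    fragments = []
    for y in range(y0, y1):
        for x in range(x0, x1):
            p = (x + 0.5, y + 0.5)
            w0, w1, w2 = edge(b, c, p), edge(c, a, p), edge(a, b, p)
            # Inside when all three edge values share the sign of the area,
            # which makes the test work for either vertex winding order.
            if area > 0:
                inside = w0 >= 0 and w1 >= 0 and w2 >= 0
            else:
                inside = w0 <= 0 and w1 <= 0 and w2 <= 0
            if inside:
                fragments.append((x, y))
    return fragments


if __name__ == "__main__":
    print(rasterize(((0, 0), (4, 0), (0, 4)), 4, 4))
```

Each call to `rasterize` looks at one primitive only, which mirrors the independence stated above.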

Fragment processing

Fragments are shaded to compute a color at each pixel. Each fragment is processed independently.
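As an illustration of per-fragment shading, the following Python sketch applies simple Lambertian (diffuse) lighting. It assumes each fragment is a dictionary carrying its pixel position and a unit surface normal, and that `light_dir` is a unit vector pointing toward the light; this data layout and the names `shade` and `process_fragments` are hypothetical. Real fragment shaders typically also sample textures and use interpolated vertex attributes.

```python
def shade(fragment, light_dir, base_color):
    """Lambertian diffuse shading for one fragment, using only its own inputs."""
    nx, ny, nz = fragment["normal"]
    lx, ly, lz = light_dir
    # Cosine of the angle between normal and light, clamped at zero
    # so surfaces facing away from the light stay black.
    intensity = max(0.0, nx * lx + ny * ly + nz * lz)
    return tuple(round(255 * channel * intensity) for channel in base_color)


def process_fragments(fragments, light_dir, base_color):
    """Shade every fragment; no fragment reads another fragment's result."""
    return [(f["x"], f["y"], shade(f, light_dir, base_color)) for f in fragments]


if __name__ == "__main__":
    fragments = [
        {"x": 0, "y": 0, "normal": (0.0, 0.0, 1.0)},   # faces the light
        {"x": 1, "y": 0, "normal": (0.0, 0.0, -1.0)},  # faces away
    ]
    print(process_fragments(fragments, (0.0, 0.0, 1.0), (1.0, 0.0, 0.0)))
```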

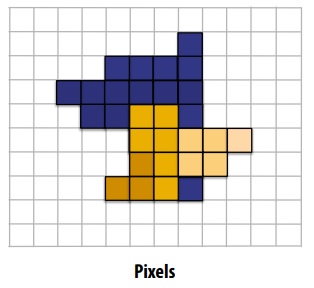

Pixel operations

Fragments are blended into the frame buffer at their pixel locations (z-buffer determines visibility).
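The visibility test can be sketched as follows. This Python example assumes shaded fragments arrive as `(x, y, z, color)` tuples with smaller `z` meaning closer to the viewer, and it handles opaque fragments only: a fragment that passes the depth test overwrites the stored color instead of being alpha-blended with it. The function name `write_pixels` is hypothetical.

```python
def write_pixels(fragments, width, height, background=(0, 0, 0)):
    """Resolve visibility with a z-buffer and return the color buffer."""
    color = [[background] * width for _ in range(height)]
    depth = [[float("inf")] * width for _ in range(height)]
    for x, y, z, rgb in fragments:
        # Depth test: keep the fragment only if it is closer than
        # whatever has already been written at this pixel.
        if z < depth[y][x]:
            depth[y][x] = z
            color[y][x] = rgb
    return color


if __name__ == "__main__":
    red, green, blue = (255, 0, 0), (0, 255, 0), (0, 0, 255)
    fragments = [(0, 0, 0.8, red), (0, 0, 0.3, blue), (0, 0, 0.5, green)]
    print(write_pixels(fragments, 2, 1))
```

With opaque fragments at distinct depths, the final image does not depend on the order in which fragments arrive, which is what lets the earlier stages run in parallel.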

There are five pipeline entities:

- Vertices

- Primitives

- Fragments

- Shaded fragments

- Pixels

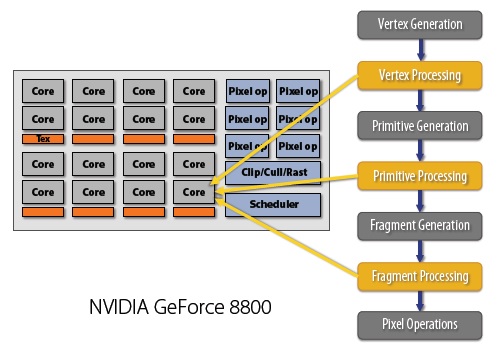

Graphics architectures

In this section, we will take a look at how the graphics pipeline is implemented and how the independent nature of the operations is maintained.

This image shows the architecture of the NVIDIA GeForce 8800, released in 2006:

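The above architecture uses the concept of unified shading, in which one pool of programmable processors handles vertex and fragment work alike. This is a significant improvement over earlier GPU designs with separate vertex and fragment units, and it is one of the secrets of the GPU's speed.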
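A rough way to see why unified shading helps is to compare two idealized machines with the same number of shader units. The Python sketch below is a toy model under stated assumptions, not a description of the GeForce 8800's scheduler: work is measured in abstract units, every unit finishes one unit of work per time step, and scheduling overhead is ignored. Both function names are hypothetical.

```python
def frame_time_split(vertex_work, fragment_work, vertex_units, fragment_units):
    """Fixed split: each kind of work can use only its own kind of unit."""
    return max(vertex_work / vertex_units, fragment_work / fragment_units)


def frame_time_unified(vertex_work, fragment_work, total_units):
    """Unified pool: any unit can take either kind of work."""
    return (vertex_work + fragment_work) / total_units


if __name__ == "__main__":
    # A fragment-heavy frame on 8 units, split 4 + 4 versus unified.
    print(frame_time_split(100, 900, 4, 4))   # vertex units sit mostly idle
    print(frame_time_unified(100, 900, 8))    # all units stay busy
```

In this model the split design is only as fast as its more heavily loaded half, while the unified design balances the load whatever the mix of vertex and fragment work is.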

Shader programming model

The shader programming model follows these ideas:

- Fragments are processed independently, but there is no explicit parallel programming.

- Each fragment has an independent logical sequence of control.
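These two ideas can be illustrated with a small Python sketch. The shader author writes ordinary scalar code for a single fragment, including its own branch, and a hypothetical runtime (`run_shader`, invented here for illustration) decides the order in which fragments are executed. The shuffle is a stand-in for whatever schedule the hardware might pick; because each invocation depends only on its own inputs, the resulting image is the same for every order.

```python
import random


def fragment_shader(x, y):
    """Scalar code for one fragment: a checkerboard with its own branch."""
    if (x // 8 + y // 8) % 2 == 0:
        return (255, 255, 255)
    return (0, 0, 0)


def run_shader(shader, width, height, seed=0):
    """Invoke the shader once per pixel in an arbitrary order."""
    coords = [(x, y) for y in range(height) for x in range(width)]
    random.Random(seed).shuffle(coords)  # simulated hardware schedule
    image = {}
    for x, y in coords:
        image[(x, y)] = shader(x, y)
    return image


if __name__ == "__main__":
    first = run_shader(fragment_shader, 16, 16, seed=1)
    second = run_shader(fragment_shader, 16, 16, seed=2)
    print(first == second)
```

Nothing in `fragment_shader` mentions threads, locks, or neighboring pixels, which is the sense in which the parallelism is implicit.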

Shader programming and improvements in this field give the GPU an enormous boost in speed. Check out this article to understand the intuition behind Shader Programming in CPU.